The Digital Marketing Bible (L-Z)

Everything You Ever Wanted to Know About Digital Marketing (But Were Afraid to Ask!)

Let’s not beat around the bush: digital marketing is complicated! Yes, sure, it’s easy to understand at first blush, but when it comes to making it work for you, it proves oh-so-hard to implement. How many times has your SEO company obfuscated with technobabble about topic A or topic B, only to leave you more confused at the end of the call than at the beginning of it?

Well, luckily for you, we here at SEO North Sydney & Web Design appreciate that a business owner’s time is VALUABLE. Which is why we’ve created the Digital Marketing Bible for you. Think of it as a lexicon. An A-Z of EVERYTHING related to the digital marketing industry. All the helpful content presented is factually correct and 100% accurate. Plus, answers are deliberately kept short, sharp, and to the point. Because you’re a busy person with a business to run, and you just want the FACTS, not the technobabble.

So, if you’ve got a question about something to do with digital marketing, simply type the topic into the search box below (e.g. ‘AdWords’, ‘ChatGPT’ or ‘Schema’), and you will be instantly transported to the answer you need. Alternatively, you can simply scroll down the page and devour all the information presented like a savant at an all-you-can-eat knowledge buffet! From ‘A.I’ to ‘Zesty.io’ and every topic in-between, the Digital Marketing Bible is your one-stop-shop for everything you ever wanted to know about digital marketing but were afraid to ask!

LARGE LANGUAGE MODELS (LLMS)

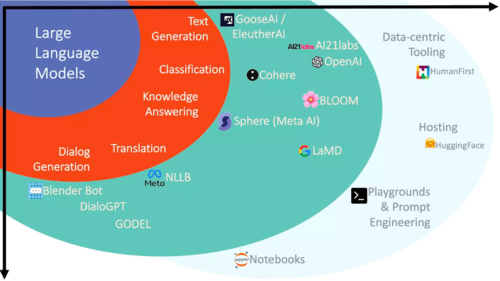

Large Language Models (LLMs) represent a significant leap in natural language processing, enabling machines to understand, generate, and manipulate human language at an unprecedented scale and complexity. These models, like OpenAI’s GPT series or Google’s BERT, are built upon neural network architectures with millions or even billions of parameters, allowing them to process and comprehend text in a manner that mirrors human language understanding. LLMs learn from vast amounts of text data, capturing patterns, semantics, and contextual relationships to generate coherent and contextually relevant text.

The impact of LLMs spans various applications, from improving language translation and content generation to aiding in complex problem-solving and assisting with information retrieval tasks. These models excel at tasks like language generation, summarization, sentiment analysis, and question-answering, and their capabilities can be improved further through additional training and fine-tuning. Their ability to understand context, nuances, and language subtleties allows them to generate human-like text, enabling advancements in chatbots, content creation, and even aiding in scientific research by sifting through vast amounts of textual data to extract meaningful insights.

However, alongside their advancements and benefits, LLMs also raise ethical considerations regarding biases in language, privacy concerns related to data usage, and the potential societal impact of AI-generated content. As these models continue to evolve and grow in complexity, responsible development and usage become crucial to ensure that the benefits of LLMs are harnessed while mitigating potential risks and challenges associated with their deployment in various domains.

LAMDA

LaMDA (Language Model for Dialogue Applications), often misspelled ‘Lambda’, is a family of conversational artificial intelligence models developed by Google and first announced in 2021. It is built on the Transformer neural network architecture, and the largest version contains 137 billion parameters. Unlike general-purpose models such as OpenAI’s GPT-3, LaMDA was trained specifically on dialogue, which helps it to hold open-ended, free-flowing conversations and to generate coherent, contextually relevant responses even in complex scenarios. LaMDA powered the first version of Google’s Bard chatbot, and it helped to pave the way for later advancements in natural language processing and AI-driven applications.

LANDING PAGE

When you click on a search result link, the page you first arrive at is known as a landing page. For a webmaster, the main question is: Do you need an SEO-focused or conversion-focused landing page, or perhaps a combination of the two?

SEO-focused pages require the usual combination of keyword-rich but interesting and informative text that is good to read, whereas that isn’t so important for conversion-focused pages. The usual formula for SEO applies to landing pages in the same way as it does to other web pages. A strong landing page should offer users what they expect, answer their queries and feature a prominent call-to-action. And don’t forget, your landing page must also be optimised for tablets and mobile phones.

LATENT SEMANTIC INDEXING (LSI)

This is an easier concept to grasp than it is to say! As far as search engines are concerned, single words in isolation don’t carry as much SEO weight as key phrases. Latent semantic indexing aims to establish a searcher’s actual intent and the contextual meaning of words, in order to improve the accuracy and relevancy of results. It does this using a mathematical technique that builds a matrix of how often words appear in each document of a large collection, then compares those patterns to find words and phrases that tend to occur together.

Fortunately, there are a number of free, online generators to make the task of choosing LSI keywords easier for you. Simply type in your subject and they will reveal a lengthy list of associated keywords and phrases. Another smart and free way to do this is to type your keyword into Google then scroll down to the bottom of the page, where you’ll find a handy list of eight search suggestions related to your enquiry.

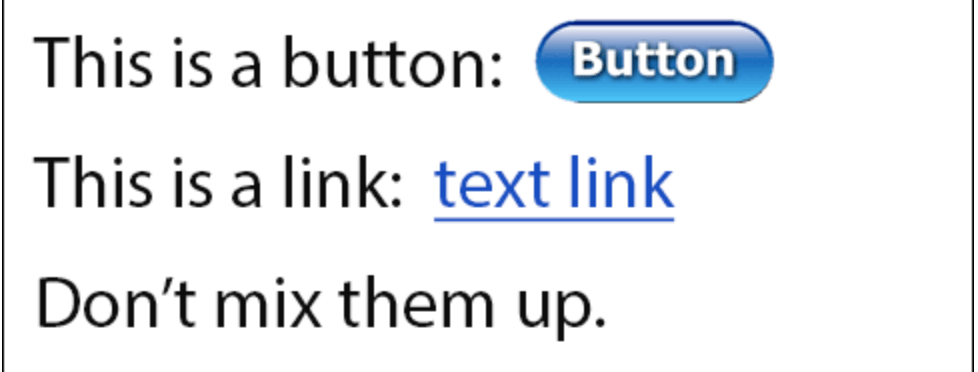

LINKS

A link on a webpage is clickable text that redirects the user to another website or an internal page of the same site (see BACKLINKS and HYPERLINKS).

LINKBAIT

Because boasting plenty of high-quality inbound links on your website is a contributory factor to page rank, web developers have developed smart tactics to attract them, known as linkbait. The primary aim is to create compelling content that other sites simply must link to. Done right, the page could go viral and cause an influx of sites wanting to link to it – great for your page rank!

You need to get creative and brainstorm with your team to come up with striking ideas for online articles, breaking news, arresting images (infographics and photos), fun quizzes, lists or anything else that’s likely to grab people’s attention. They need to be fresh and new and, ideally, create a stir in the online community and get bloggers blogging about them – that’s when memes spread exponentially.

One inadvisable and irritating linkbait practice is the increasingly common one of creating alluring but misleading titles. Users arrive on the landing page only to discover largely irrelevant information. Avoid this at all costs. You must create value to attract high-quality links.

LINK-BUILDING

The practice of actively attracting inbound links to your website (see BACKLINKS and HYPERLINKS).

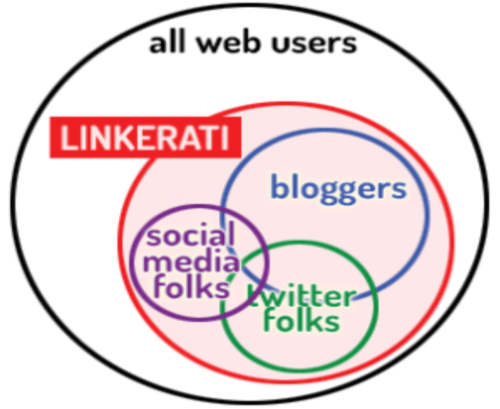

LINKERATI

The linkerati are the key people of influence and primary targets of linkbait. They include movers-and-shakers from the world of online news, social media, journalism, blogging, content creation, reviewing, forums and chatrooms. Turn linkerati heads and you may well generate a tsunami of links that can circle the globe.

LINK EXCHANGE

Link exchanges are essentially confederations of websites whose operators believe that together they are stronger and more visible on the worldwide web. When a webmaster registers a site with a link exchange, they receive HTML code that installs banner advertisements for other members of the exchange that will be displayed on their site. The webmaster then needs to create their own ad, so it can be circulated and embedded throughout the group. Advantages of link exchanges include attracting a targeted audience to sites that display similar topics or themes. They are also a relatively stable method of encouraging backlinking.

All good then? Well, no, because the link exchange is yet another internet phenomenon that rubs prickly Google up the wrong way and could result in a plunge in page rank. Rival banner ads may also tempt users away from sites before they’ve explored beyond the landing page.

LINK FARM

A link farm is a group of websites that connect to one another via hyperlinks, with the aim of growing their link portfolios and ascending the search engine rankings. However, this is deemed a black hat technique, since the ultimate aim is to build high numbers of links but with scant regard for quality or relevance. Google’s algorithms are fine-tuned to hunt down and punish sites associated with link farming. That means page rank penalties could well result.

LINK JUICE

You can think of link juice as votes of confidence in a web page, cast by way of internal or external links to other pages. Although you won’t hear Google officially mention the term, it is a major factor in gaining high page rank.

It’s not just about numbers though. Link juice is far more effective when the pages it flows from are relevant to the subject, possess high page rank and authority, contain high quality content, appear high in SERPs, have user-generated content and, preferably, are referred to frequently in the various social media channels.

On the flipside, links are less effective when they are irrelevant and unnatural (e.g. a coffee shop in Sydney linked to a car rental office in Los Angeles). Other inferior links are those that are paid for or derived from link farms, link exchanges and lowly ranked pages. They tend to be spammy and packed with uninformative, poorly-written content. Avoid at all costs!

LINK LOVE

Any outbound link that confers trust and authority to another web page for the purposes of elevated page rank. All websites benefit from being smothered in link love. Relevant links to other sites also improve the user experience.

LINK PARTNER

A reciprocal agreement between two sites that link to each other, as commonly used by link exchanges to manipulate search results. Not something Google and other search engines generally approve of, hence only a low SEO value is placed on such relationships.

LINK POPULARITY

Refers to the total number of backlinks that point to a website, which was historically a good measure of a site’s status. In current times, remember, it’s more about link quality than quantity. No one likes spammy, link-packed sites, least of all search engines.

LINK SPAM (BLOG SPAM, COMMENT SPAM)

Link spam often takes the black hat form of poorly written and designed, fake blogs (splogs) that contain links to websites. Alternatively, it can be in the form of guest posts on otherwise legitimate sites. Either way, the aim is the same – to manipulate SERPs by nefarious means and push websites further up the page by conveying authority via links. Google’s algorithms are constantly updated, in an effort to identify this form of spamming and negate any benefits it may bring.

LINK SPAM UPDATE

The Link Spam Update refers to Google’s ongoing efforts to combat manipulative and spammy link building practices that aim to artificially boost a website’s search engine rankings. Links have long been a crucial factor in Google’s ranking algorithm, serving as a signal of a website’s authority and credibility. However, over time, some websites have attempted to game the system by engaging in link spam tactics, such as buying or exchanging links, using link farms, or participating in manipulative link schemes to artificially inflate their backlink profile.

Google’s Link Spam Update involves continuous algorithmic improvements and updates aimed at identifying and penalising such spammy link building tactics. The focus is on rewarding natural, organic link-building practices that reflect genuine endorsements and references from reputable sources. The update aims to devalue links from low-quality or irrelevant sites while rewarding high-quality, relevant, and contextually meaningful links that genuinely contribute value to the user experience.

This update underscores Google’s commitment to providing users with high-quality and relevant search results, deterring deceptive SEO practices that aim to manipulate rankings. It encourages website owners and SEO practitioners to prioritise content quality, relevance, and user-centric approaches to link building, fostering a healthier and more credible online ecosystem. Websites engaging in manipulative link-building practices risk penalties and drops in search rankings, emphasising the importance of ethical and natural link acquisition strategies in SEO efforts.

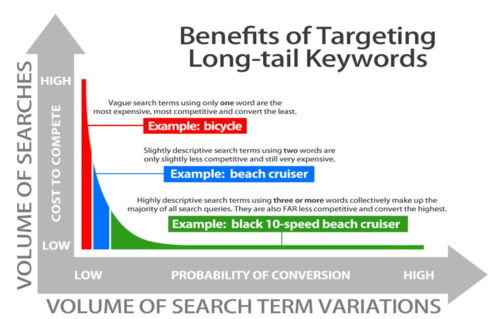

LONG TAIL KEYWORD

Back to our old friend, the keyword. To find the most effective keywords, it’s important to do your research and anticipate which words and phrases potential customers are most likely to type into a search. This is where the long tail keyword or, more accurately speaking, keyphrase comes in.

Here’s an example. If looking for a brand-new vehicle, ‘Ford car’ might throw up a huge number of results but many of those won’t be specific to your needs. ‘Ford cars for sale’, however, will begin to refine the results. ‘New Ford cars for sale in Sydney’ will narrow the field still further.

It’s increasingly tough to stand out in an online market crowded with big players, who are prepared to throw millions of dollars at their SEO strategies. Long tail keywords are a cost-effective way to help you to define your business and create a niche market. You are also more likely to attract genuine prospects rather than surfers who are just curious and passing through. It’s a case of quality site traffic, not quantity. And when it comes to funding pay-per-click campaigns, long tail keywords are likely to be cheaper.

MASHUP

A mashup webpage grabs various forms of content from multiple sources – blogs, news items, reviews, maps, images, video – and combines them with an additional functionality, to present something of added value and interest to users. Smartphone apps are currently among the most common platforms for mashups, which typically pull in their content via APIs (application programming interfaces). Twitterspy and WeatherBonk are successful examples of the form.

MEDIC / CORE UPDATE

The “Medic” or Core Update, first identified in August 2018, is a significant algorithmic update by Google that notably impacted websites in the health and wellness sectors, along with other Your Money or Your Life (YMYL) niches. YMYL refers to content that can directly influence a person’s health, finances, safety, or overall well-being. The update aimed to improve the quality and trustworthiness of search results in these sensitive areas, ensuring users receive accurate and reliable information when seeking advice or guidance on important topics.

The Medic/Core Update had a substantial impact on how Google assesses the expertise, authoritativeness, and trustworthiness (E-A-T) of content in these niches. Websites providing health-related information were scrutinised more closely in terms of the qualifications of content creators, the credibility of sources, and the overall reliability of the information presented. This update placed a greater emphasis on high-quality, authoritative content backed by expertise and reputable sources, aiming to elevate trustworthy and credible sources in search rankings while demoting content lacking credibility or expertise.

For website owners and content creators in the health, finance, or other YMYL niches, the Medic/Core Update highlighted the importance of producing well-researched, authoritative, and accurate content. It emphasised the need for transparent information sources, expert authorship, and comprehensive content that serves the best interests of users seeking reliable guidance on critical life topics. Adhering to E-A-T principles and providing valuable, trustworthy information became pivotal for maintaining or improving search visibility in these sensitive niches post the Medic/Core Update.

META DESCRIPTION

This is the short description of a webpage (search engines typically display up to around 160 characters before cutting it off) that appears on search engine results pages, immediately below the page’s title. Whilst handy for the user as an explanation of what the page contains, meta descriptions have precious little SEO value and it’s not necessary to spend a whole lot of time drafting them. They should, however, be different on every page.
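If you’d like to check your own descriptions against the two rules of thumb above (keep them short, don’t repeat them), a few lines of Python will do the job. This is only a sketch: the `audit_descriptions` function, the sample URLs and the default limit of 160 characters are assumptions made for illustration, not an official Google rule or tool.

```python
from collections import defaultdict


def audit_descriptions(pages, limit=160):
    """Flag meta descriptions that are too long or reused.

    `pages` maps a URL path to its meta description (hypothetical data).
    Returns a tuple: (too_long, duplicates).
    """
    too_long = sorted(
        url for url, text in pages.items() if len(text.strip()) > limit
    )

    # Group URLs by normalised description text to spot reuse.
    by_text = defaultdict(list)
    for url, text in pages.items():
        by_text[text.strip().lower()].append(url)
    duplicates = sorted(
        sorted(urls) for urls in by_text.values() if len(urls) > 1
    )

    return too_long, duplicates


sample_pages = {
    "/": "New and used Ford cars for sale in Sydney.",
    "/contact": "New and used Ford cars for sale in Sydney.",
    "/about": "Our story. " * 20,  # deliberately well over 160 characters
}

too_long, duplicates = audit_descriptions(sample_pages)
print(too_long)    # ['/about']
print(duplicates)  # [['/', '/contact']]
```

In practice you would fill `pages` from a crawl of your own site rather than typing the descriptions in by hand.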

META TAGS

Meta tags include meta descriptions and are of importance to SEO. They should feature in the HTML code embedded in the head section of every webpage, and are there to let web crawlers (such as Googlebot) know exactly what each page contains, which is why they need to be extremely accurate and informative.

The best webmasters know there’s really no need to go overboard with the number of meta tags used, as they just clog up code space. It’s best to opt for the required minimum. They must include: meta content type, title, meta description and viewport.
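To see whether a page carries that minimum set, you can scan its HTML with Python’s built-in `html.parser` module. The sketch below is illustrative only: the `HeadTagAudit` class, the `missing_head_tags` helper and the sample page are made up for this example, and it treats either `<meta charset>` or an `http-equiv="Content-Type"` tag as the ‘meta content type’.

```python
from html.parser import HTMLParser

# The four tags named above (labels are our own shorthand).
REQUIRED = {"content type", "title", "description", "viewport"}


class HeadTagAudit(HTMLParser):
    """Records which of the recommended tags appear in a page."""

    def __init__(self):
        super().__init__()
        self.found = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self.found.add("title")
        elif tag == "meta":
            name = (attrs.get("name") or "").lower()
            http_equiv = (attrs.get("http-equiv") or "").lower()
            if "charset" in attrs or http_equiv == "content-type":
                self.found.add("content type")
            elif name in ("description", "viewport"):
                self.found.add(name)


def missing_head_tags(html):
    """Return the recommended tags that are absent, in alphabetical order."""
    audit = HeadTagAudit()
    audit.feed(html)
    return sorted(REQUIRED - audit.found)


sample_page = """
<html><head>
<meta charset="utf-8">
<title>New Ford cars for sale in Sydney</title>
<meta name="viewport" content="width=device-width, initial-scale=1">
</head><body></body></html>
"""

print(missing_head_tags(sample_page))  # ['description']
```

Here the sample page has everything except a meta description, so that is the only item reported.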

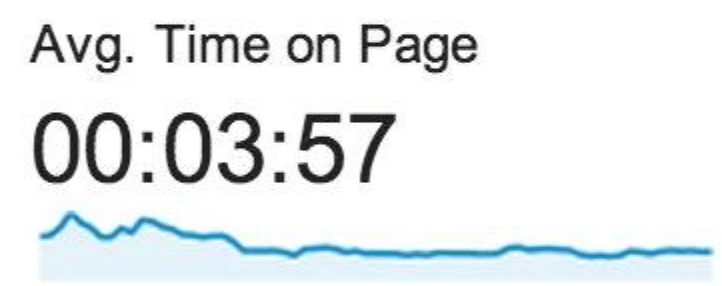

METRICS

Metrics are standards of measurement used in search engine analytics. The metrics that site owners are generally most interested in include backlinks, keyword rankings, website traffic, conversions and revenue. It’s worth pointing out that hitting the #1 spot in SERPs doesn’t guarantee increased revenue. There are many other factors to consider when building a website that converts traffic into sales.

MFA

‘Made for Advertisements’ is a term, often used in a derogatory way, to describe websites that have little purpose other than to lure visitors into clicking on ads. They are also sometimes referred to as ‘Made for AdSense’ sites and usually have little or no content other than clickable ads. If there is any content, it’s often low quality and/or scraped from other sites.

MIDJOURNEY

Midjourney is an AI-powered platform that allows you to generate stunning images from just your imagination. Using text prompts, you describe what you want the image to depict, and Midjourney’s sophisticated algorithms translate your words into a unique visual creation. This opens doors for artists, designers, and anyone with a creative spark to explore countless visual ideas and bring them to life, making Midjourney a powerful tool for artistic expression and exploration.

MIRROR SITE

Mirror sites share identical content but have different URLs (Uniform Resource Locators) i.e. different web addresses. Google despises duplication and usually sees mirror sites as plagiaristic, black hat techniques. However, it’s sometimes necessary to create a carbon copy of a popular site, to increase loading speeds, by lessening the burden on its web server. It’s also occasionally used to launch sites in different geographical regions. It’s important to decide which version of your site you need to be visible to Googlebots and visitors, in order to avoid indexing issues and a confusing user experience.

MOBILE FIRST INDEX

The Mobile-First Index represents a fundamental shift in Google’s approach to indexing and ranking websites, prioritising the mobile version of a site over its desktop counterpart for search engine rankings and results. With the increasing dominance of mobile devices in internet usage, Google recognises the importance of delivering a seamless and optimised experience to mobile users. This shift signifies Google’s commitment to evaluating and ranking websites primarily based on their mobile version’s content, structure, and user experience, rather than focusing on the desktop version.

For website owners and developers, the Mobile-First Index necessitates a strong emphasis on mobile responsiveness, ensuring that their sites are well-designed, fast-loading, and user-friendly across various mobile devices. Optimising for mobile-first involves responsive web design, where the layout and content adapt dynamically to fit different screen sizes, ensuring a consistent and intuitive experience for users on smartphones and tablets. This approach not only caters to the growing mobile user base but also aligns with Google’s ranking criteria, potentially improving a site’s visibility and rankings in mobile search results.

Adapting to the Mobile-First Index means prioritising mobile usability and experience in web design and content creation strategies. It encourages webmasters to focus on factors such as mobile-friendly design, page speed, responsive layouts, and content relevance for mobile users. Embracing mobile-first practices becomes imperative for maintaining or improving search rankings and ensuring a positive user experience, aligning with the evolving landscape of digital consumption driven by mobile devices.

MOBILE SPEED UPDATE

The Mobile Speed Update, introduced by Google in July 2018, marked a significant shift in how page speed impacts mobile search rankings. This update emphasised the importance of website loading speed on mobile devices as a ranking factor in Google’s search algorithm. Google acknowledged the rising prominence of mobile browsing and aimed to enhance user experience by prioritising fast-loading mobile pages in search results. Websites with faster loading speeds on mobile devices were favoured in search rankings, improving their visibility to users searching on smartphones and tablets.

For website owners and developers, the Mobile Speed Update underscored the criticality of optimising their sites for swift loading times on mobile devices. Factors such as minimising server response time, reducing file sizes, leveraging browser caching, and employing efficient coding practices became crucial in improving mobile page speed. Implementing techniques like responsive design and optimising images and resources helped enhance user experience by delivering quicker access to content, reducing bounce rates, and potentially boosting search rankings in mobile search results.

This update acted as a catalyst for webmasters to prioritise mobile site speed as a key aspect of their SEO strategy. Optimising for mobile speed not only aligned with Google’s algorithmic preferences but also catered to the growing number of mobile users, ensuring a smoother and faster browsing experience. Websites that invested in improving mobile page speed witnessed benefits not only in search rankings but also in user engagement and overall site performance on mobile devices.

MONETISE

Whilst many websites simply exist to provide information or display calls to action (e.g. phone your company or complete an online order form), others are optimised to independently generate income through the actions of visitors.

The most common method of achieving this is via Google AdSense campaigns (pay-per-click advertising), whereby you get paid when visitors click on third-party ads on your site. Affiliate marketing is another way to make money from a website or blog. Users are encouraged to click on affiliate links and the site owner profits by receiving a cut of any subsequent sale. Commission rates vary considerably but may be as high as 70% in some cases.

Other ways to monetise your website include displaying those annoying but profitable pop-up ads, renting site space to others, selling e-books or running a full-on e-commerce site to sell your products or services.

MONEY PAGE

This is a webpage specifically designed to make money for the operator through a call to action e.g. completion of an order form, calling an order line, or clicking on a link. A well-designed website will make this page prominent and easy to navigate to.

NATURAL LANGUAGE PROCESSING (NLP)

Natural Language Processing (NLP) is a branch of artificial intelligence focused on enabling computers to understand, interpret, and generate human language. It encompasses a variety of techniques including tokenization, part-of-speech tagging, named entity recognition, and sentiment analysis, among others. NLP finds applications in search engines, virtual assistants, chatbots, sentiment analysis, machine translation, and more. By processing and analyzing large amounts of text data, NLP enables automation of tasks, enhances user experiences, uncovers valuable insights, and facilitates language understanding, contributing to advancements across industries and domains.

NATURAL (ORGANIC) SEARCH RESULTS

A webpage that appears in search engine results pages (SERPs) without being sponsored or paid for in some way is deemed to be there ‘naturally’ or ‘organically’. Natural search results in Google, Yahoo! and Bing generally appear below a list of paid-for adverts at the top of the page, which are often distinguishable by a small ‘Ad’ symbol and/or different colours and fonts. Despite this, research has shown that over a third of users don’t realise that the top results are paid advertisements, although Google has disputed these findings by claiming that most users click on organic listings. It’s simple for users to download plug-ins and add-ons to block all advertisements, if they prefer.

From an SEO perspective, it may seem like the easy option to simply pay to get to page #1, but it’s best not to abandon good old natural strategies. Plus, today’s online marketers neglect to leverage social media platforms like Facebook, Instagram, Pinterest at their peril. In today’s world, an integrated marketing approach is infinitely more effective. Statistics prove that organic delivers more relevant traffic than paid ads. Organic also produces long-term results. Investing money in paid ads means that traffic will drop off as soon as you stop feeding the meter.

NEAR ME SEARCHES

“Near Me” searches have become a ubiquitous phenomenon in today’s digital landscape, reflecting the shift in user behaviour towards hyper-local and immediate information retrieval. These searches involve users looking for products, services, or information available in their vicinity, often using phrases like “restaurants near me” or “hardware store near me.” The prominence of mobile devices equipped with location-based services has fueled the surge in “Near Me” searches, empowering users to find nearby businesses or points of interest with unprecedented ease and convenience.

For businesses, optimising for “Near Me” searches has become pivotal in capturing local customers and enhancing their online visibility. This involves local SEO strategies aimed at ensuring that a business appears prominently in search results when users look for services or products in their vicinity. Factors such as having an accurate and updated Google Business Profile (formerly Google My Business) listing, incorporating location-specific keywords in content, and garnering positive reviews and ratings play a significant role in ranking higher in “Near Me” searches.

The prevalence of “Near Me” searches underscores the importance of local relevance and proximity in the user’s decision-making process. It signifies the growing reliance on digital platforms for immediate and location-specific information, presenting businesses with opportunities to leverage local SEO strategies and provide seamless experiences to users searching for nearby solutions or services. Embracing and optimising for “Near Me” searches has become a crucial aspect of a comprehensive digital marketing strategy, especially for businesses targeting local audiences.

NOFOLLOW LINKS

Nofollow tags (attributes) can be inserted into a website’s HTML code, to stop search engines counting outbound links as endorsements or “votes” in favour of other webpages, which could help them to rank higher in SERPs. If Google suspects you’re selling or trading links, your site could well suffer penalties.

Historically, the nofollow attribute dates all the way back to 2005, and was a cooperative response by Google, Yahoo!, Microsoft and major blogging platforms to the explosion of black hat comment spam in blogs. Up to that point, anyone could drop links into blog comments as a crafty way to generate link juice. Nofollow tags tell webcrawlers to ignore specific outgoing links, which means PageRank won’t be transferred. Since 2019, Google has treated nofollow as a hint rather than a strict instruction.
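In HTML, the attribute sits on the link itself as `rel="nofollow"`. If you want to see which links on a page carry it, the following Python sketch lists every link alongside a true/false flag. The `LinkRelAudit` class, the `audit_links` helper and the example URLs are hypothetical names chosen for this illustration.

```python
from html.parser import HTMLParser


class LinkRelAudit(HTMLParser):
    """Collects (href, is_nofollow) for every <a> tag that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attrs = dict(attrs)
        href = attrs.get("href")
        if not href:
            return
        # rel can hold several space-separated values, e.g. "nofollow noopener".
        rel_tokens = (attrs.get("rel") or "").lower().split()
        self.links.append((href, "nofollow" in rel_tokens))


def audit_links(html):
    """Return a list of (href, is_nofollow) pairs in page order."""
    parser = LinkRelAudit()
    parser.feed(html)
    return parser.links


sample_html = """
<p>Read our <a href="https://example.com/guide">guide</a>.</p>
<p>Visitor comment: <a href="https://example.org/offer" rel="nofollow">great deal</a></p>
"""

for href, is_nofollow in audit_links(sample_html):
    print(href, "nofollow" if is_nofollow else "followed")
```

The first link would pass on link juice in the usual way, while the second (the kind of link a commenter might drop in) is marked so that it shouldn’t.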

NOINDEX

Whilst the usual aim of SEO is to attract spiders, ‘noindex’ is a meta tag added to a webpage’s HTML code that instructs search engine webcrawlers not to index that page. The code is inserted in the head section of a webpage and can be set to block all webcrawlers, or just specific ones like Google’s, from indexing it. There are many reasons why a webmaster might want to prevent indexing, including privacy or duplication issues, or blocking pages that are only temporary. It could also be a special promotions page that you only want subscribers to access.

Some search engines interpret noindex tags differently, meaning your page may still be indexed by them. If pages still appear in Google, it could also be that they’ve not been crawled since the tags were added. The URL Inspection tool in Google Search Console, which replaced the older ‘Fetch as Google’ tool, is useful for debugging such crawl issues. Another reason could be a conflicting ‘robots.txt’ file: if it blocks a page from being crawled, the webcrawler never gets to see the noindex tag on that page.

Accidental or malicious installation of noindex tags would be disastrous for a website’s SEO and potential revenue, so it’s highly recommended that a monitoring system is put in place to alert webmasters of any change in the head section of HTML.
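A monitoring system of this kind can be very simple at heart: keep yesterday’s copy of the page, fetch today’s, and raise the alarm if a noindex tag has appeared. The Python sketch below shows just that comparison step. The `NoindexDetector` class and the `noindex_targets` and `newly_blocked` helpers are hypothetical names, and as a simplifying assumption the code only looks at the `robots` (all crawlers) and `googlebot` (Google only) meta names.

```python
from html.parser import HTMLParser

# Assumption: only these two meta names are checked in this sketch.
WATCHED_NAMES = ("robots", "googlebot")


class NoindexDetector(HTMLParser):
    """Records which watched meta tags carry a noindex directive."""

    def __init__(self):
        super().__init__()
        self.blocked = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        content = (attrs.get("content") or "").lower()
        directives = {part.strip() for part in content.split(",")}
        if name in WATCHED_NAMES and "noindex" in directives:
            self.blocked.add(name)


def noindex_targets(html):
    """Return the watched meta names that currently say noindex."""
    detector = NoindexDetector()
    detector.feed(html)
    return sorted(detector.blocked)


def newly_blocked(previous_html, current_html):
    """Return meta names that say noindex now but did not before."""
    before = set(noindex_targets(previous_html))
    after = set(noindex_targets(current_html))
    return sorted(after - before)


yesterday = '<head><meta name="robots" content="index, follow"></head>'
today = '<head><meta name="robots" content="noindex, follow"></head>'

print(newly_blocked(yesterday, today))  # ['robots']
```

A real monitor would also need to download the pages on a schedule and send an email or chat alert whenever `newly_blocked` returns anything; those parts are left out here.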

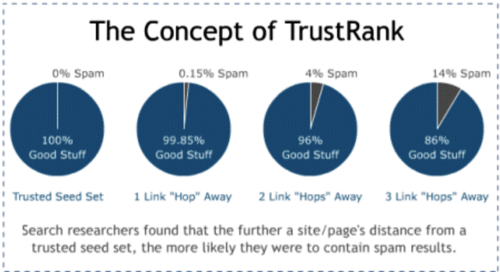

NON-RECIPROCAL LINK

If a website links to a third-party site that fails to link back to it, it’s known as a non-reciprocal link. When it comes to SEO impact, search engines tend to give more weight to non-reciprocal links, because they are less likely to indicate collusion between sites for the purpose of improving page rank. A relatively large number of inbound non-reciprocal links tells spiders that your site contains valuable information.
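The idea is easy to express in code. Given a list of who links to whom, a link is non-reciprocal when the reverse link is missing. The Python sketch below uses made-up domain names and a hypothetical `non_reciprocal_links` helper. It illustrates the definition only, not how any search engine actually weighs links.

```python
def non_reciprocal_links(links):
    """Return the (source, target) pairs that have no link back.

    `links` is an iterable of (source_site, target_site) tuples.
    """
    link_set = set(links)
    return sorted(
        (source, target)
        for source, target in link_set
        if (target, source) not in link_set
    )


sample_links = [
    ("a.example", "b.example"),  # a and b link to each other (reciprocal)
    ("b.example", "a.example"),
    ("c.example", "a.example"),  # c links to a, but a never links back
]

print(non_reciprocal_links(sample_links))  # [('c.example', 'a.example')]
```

In this example, `a.example` has one inbound non-reciprocal link (from `c.example`), which is the kind the paragraph above describes as carrying more weight.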

OFF-PAGE SEO

A successful SEO strategy needs to include both on-page and off-page methods. Off-page SEO covers everything that happens away from your own pages to build a site’s ‘domain authority’, which is scored on a 100-point scale (100 being the highest) introduced by SEO resource company Moz and now used as a marker throughout the industry. A high score is determined by the quality of the backlinks to a site i.e. links from recognised authority sites that search engines and human visitors trust and value highly. It does NOT mean a high number of low-quality, spammy links bought in from third parties.

The off-page SEO game usually takes time to play out. It isn’t a quick fix strategy but will reap rewards in terms of page rank further down the line. A website with world-class content will attract links like a magnet.

ON-PAGE SEO

On-page SEO doesn’t rely on third-party links but on the efforts webmasters put into website content. That means incorporating relevant, original and informative content, powerful keywords, alt text and title tags. It can also mean leveraging social media sites and blogs through submissions and comments.

On-page and off-page SEO should complement one another but it’s important to get your house in order first, by throwing all your resources at building a website that is both human and search engine spider-friendly. Once those solid foundations are established, you can start thinking about your off-page tactics.

OPENAI

OpenAI is an artificial intelligence research laboratory focused on developing advanced AI technologies for the betterment of society. It aims to create AI systems that are not only capable but also safe and beneficial for humanity. OpenAI conducts groundbreaking research in various areas of AI, including natural language processing, reinforcement learning, and robotics.

Within OpenAI’s array of projects, ChatGPT stands out as a flagship example of their achievements in natural language processing. ChatGPT is an AI model trained to understand and generate human-like text, making it adept at engaging in conversation with users. It’s designed to assist with tasks ranging from answering questions and providing information to simulating dialogue and facilitating communication. ChatGPT represents OpenAI’s commitment to pushing the boundaries of AI capabilities while also ensuring its responsible and ethical deployment in real-world applications.

ORGANIC LINK

Organic links come from other websites, blogs or social media pages. They are not paid for or solicited in any way: the other site links to yours without being asked, so Googlebot and other search engines’ spiders treat the link as natural. They are the antithesis of links purchased from link farms and other illicit, black hat sources.

Organic links are a direct result of building high-quality websites that are informative, trustworthy and contain masses of well-written, original content that visitors are keen to read, plus video clips and arresting images. You need to give other sites a compelling business reason to build links to your site. The main advantages of organic links are that they can drive targeted traffic to your site and that the Googlebots regard them as positive ‘votes.’ They are also a powerful means of establishing a reputation in your niche area and becoming an authority site (see Authority Sites).

You can take advantage of 3rd party link-building tools, like the Moz company’s Open Site Explorer, to accurately assess the value of other websites and blog authors, from whom you desire to elicit high-quality link juice. Special metrics allow you to analyse the strength of your own domain’s inbound link profile and compare it with those of your main competitors. It may take several months to build impressive links but it’s well worth a webmaster’s time and effort.

OUTLINK

Outbound links are those that point directly to domains other than your own. Provided a webmaster’s overall SEO work is up to scratch, there is a positive correlation between a webpage’s outgoing links and its PageRank, and the greater the authority of the linked site, the better your site’s ranking is likely to be, provided you are naturally linking to a site or blog with the same theme as your own business. For example, if you run a hairdressing salon in Sydney and link to a popular restaurant in New York City, Google’s algorithms will find you out and potentially impose a ranking penalty.

It’s advisable to focus your link-building efforts on your ‘money pages’ to really get that quality link juice flowing. But remember, from a user experience perspective, it can be detrimental to have too many links on the page, so always opt for quality over quantity. And remember – relevancy is everything!

PAGE EXPERIENCE UPDATE

The Page Experience Update, introduced by Google in June 2021, represents a significant algorithmic shift that emphasizes user-centric metrics in evaluating website performance and rankings. This update places a spotlight on the overall user experience offered by a web page, encompassing various factors that directly impact user interaction and satisfaction. Core Web Vitals, a set of specific metrics related to loading speed, interactivity, and visual stability, became crucial components in assessing a page’s user experience.

Google’s Page Experience Update aims to prioritize web pages that offer a seamless, fast, and engaging experience for users. Factors like page loading speed, responsiveness across devices, the absence of intrusive interstitials or pop-ups, and visual stability during page loading are integral elements evaluated for ranking purposes. Websites that excel in providing a positive user experience, as indicated by these metrics, stand to benefit from improved visibility in search results, enhancing their chances of reaching and retaining users.

For website owners and developers, the Page Experience Update underscores the significance of user-centricity in web design and optimization strategies. Prioritizing factors that contribute to a smooth and enjoyable user experience, such as optimizing site speed, enhancing interactivity, and ensuring visual stability, becomes crucial for maintaining or improving search rankings. By focusing on user-centric metrics outlined in the Core Web Vitals, webmasters can align their efforts with Google’s ranking criteria, ultimately delivering enhanced experiences to users while potentially improving their website’s visibility in search results.

PAGERANK

Put simply, PageRank (PR) is a value assigned by Google to an individual webpage, based on its importance, and is measured on a sliding scale of 0 to 10. A PR of 10 is extraordinarily rare. The PageRank algorithm was originally conceived by one of Google’s two founders and smartest brains – the legendary Larry Page.

Google figures that when one website links to another, it is effectively casting a vote in favour of that site. The higher the authority of the domain casting the vote, the greater the positive impact on the webpage’s search engine rank and the higher its place in SERPs (all other ranking factors being equal). This means that links are an indispensable component of any successful SEO strategy.
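The ‘links as votes’ idea can be illustrated with the simplified PageRank calculation described in the original published research. What follows is only a minimal sketch, not Google’s production algorithm: the four-page link graph, the page names and the damping factor of 0.85 are all assumptions made up for illustration.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by repeated vote-passing (power iteration).

    links maps each page to the list of pages it links to.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline score.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            if outlinks:
                # A page splits its vote equally between the pages it links to.
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its vote evenly.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical four-page web: every other page links to "home".
example_links = {
    "home": ["blog"],
    "blog": ["home"],
    "about": ["home"],
    "contact": ["home", "blog"],
}
scores = pagerank(example_links)
best_page = max(scores, key=scores.get)
```

In this made-up graph, `best_page` comes out as `"home"`, because it collects votes from every other page, while `"about"` and `"contact"` receive no inbound links and keep only the baseline score.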

Google keeps its PageRank algorithm a closely guarded secret but many experts in the SEO industry suspect it is based on a logarithmic system. This may trigger a blank expression but, for our purposes, it means that it takes a lot more additional PageRank to move up to the next level than it did to achieve the previous one. You can check PR by adding third-party software to your browser. There are many different versions available as extensions.

The level of PageRank tends to increase as the number of pages within a website expands. PageRank is distributed throughout a site’s pages via internal links. For an SEO consultant, it’s important to get the internal link-building right, in order to maximize the site’s PageRank potential. This does NOT mean creating identical or close-to-identical pages, in a vain attempt to fool the Googlebots into voting for them. New page content must always be original or Google could hit you with hefty penalties for spamming.

It’s most beneficial to channel link juice to the important or money pages e.g. hub pages, indexes and directories. Outbound links cause a website to leak PageRank. One way to negate this effect is to ensure the links are reciprocated.

PAGE RANK SCULPTING

PageRank sculpting was a tactic employed in the realm of SEO to manipulate the flow of PageRank within a website by strategically controlling the distribution of internal links. It aimed to concentrate PageRank—Google’s algorithm for ranking web pages—on specific pages deemed more critical or valuable for ranking purposes. This tactic involved using nofollow attributes on internal links to prevent the flow of PageRank to certain pages, thus redirecting more PageRank “juice” to other linked pages.

However, Google’s algorithm evolved over time, and its approach to handling nofollow attributes and PageRank sculpting changed. Google started treating nofollow attributes more as hints rather than directives, meaning that nofollow links didn’t necessarily hoard PageRank as initially believed. Instead, Google allocated PageRank as it saw fit, irrespective of nofollow attributes. This essentially rendered the tactic of PageRank sculpting through nofollow links less effective or impactful in influencing rankings.

As Google’s algorithms became more sophisticated, the emphasis shifted from manipulating PageRank flow through internal linking to prioritizing user-centric factors, content relevance, and overall website quality. The strategy of PageRank sculpting through nofollow attributes lost its prominence as Google’s ranking criteria evolved to prioritize more holistic aspects of user experience and content quality.

PAID FOR INCLUSION (PFI)

A popular alternative for website operators, who simply can’t or won’t wait for their sites to appear in SERPs or online directories, is to make payments to publishers and in return jump to the top of the first page. These schemes are usually known as ‘pay-per-click’ (PPC). Google AdWords, Yahoo Advertising and Bing Ads (the latter two now amalgamated in a single platform) are the main players in this arena. Some social media companies, including Twitter and Facebook, have also adopted a pay-per-click marketing strategy.

The PFI model most commonly works through a bidding system, whereby advertisers bid on keyword phrases relevant to their specific market. The amount they end up paying when a visitor clicks on their advertisement is termed ‘cost-per-click’ (CPC). Company budgets for PPC range from $50 to $500,000 or even more per month.

In most search engines, PPC ads appear either at the top of a webpage’s search results (above the organic listings) or down the right-hand side of the page. They are usually distinguished by a green ‘Ad’ symbol or the word ‘Sponsored’.

PANDA

The Panda algorithm, introduced by Google in 2011, marked a significant milestone in search engine optimization (SEO) history by targeting low-quality and thin content across websites. Panda aimed to improve the quality of search results by penalising websites that offered poor or shallow content, such as duplicate, thin, or low-value pages. It sought to elevate higher-quality and more informative content, ensuring that users were directed to credible and valuable sources when conducting searches.

The Panda algorithm focused on evaluating the overall quality of a website’s content, analysing factors such as uniqueness, relevance, authority, and user engagement. Websites with substantial amounts of duplicate or low-quality content saw a decrease in rankings, while those offering original, in-depth, and valuable content experienced improved visibility in search results. Panda served as a catalyst for website owners and content creators to prioritise high-quality, original, and user-focused content, emphasising the importance of providing value and relevance to users. Read more…

PENGUIN

The Penguin algorithm, introduced by Google in 2012, targeted manipulative link-building practices and spammy link schemes that aimed to artificially inflate a website’s search engine rankings. Penguin focused on penalising websites that engaged in tactics such as buying links, participating in link farms, or using manipulative link schemes to manipulate their backlink profiles. The goal was to improve the quality of search results by promoting websites with natural, organic, and authoritative link profiles while demoting those employing deceptive or spammy practices.

This algorithm update emphasised the importance of high-quality and relevant backlinks, considering them as valuable signals of a website’s authority and credibility. Websites that were found to have unnatural or spammy links pointing to them faced penalties, experiencing drops in search rankings. Penguin incentivized webmasters and SEO practitioners to prioritise ethical and natural link-building strategies, focusing on acquiring genuine, contextually relevant, and authoritative backlinks to enhance their site’s credibility.

PIGEON

The Pigeon update, launched by Google in 2014, aimed to refine local search results by providing more accurate and relevant information to users seeking local businesses or services. This algorithm update significantly influenced the way Google interpreted and displayed local search queries, impacting both Google Maps and the organic search results. Pigeon aimed to improve the proximity and relevance of local search results by tying local search ranking factors more closely to traditional web ranking signals.

The update brought about changes in how local businesses appeared in search results, emphasising factors like location, distance, and relevance to the user’s query. Pigeon aimed to provide more precise and contextually relevant results for location-based searches, ensuring that users received listings that were closer in proximity and more aligned with their search intent. This update also emphasised the importance of local SEO strategies for businesses, encouraging them to optimise their online presence to improve visibility in local search results.

PORTAL

Web portals are sites designed to connect customers, employees, partners and other stakeholders. They collate information from various sources for sharing purposes. They are also frequently used to enable collaboration, project management, application integration, e-commerce or the sharing of business intelligence. They can be designed to operate on PCs, Macs, tablets and smartphones.

Portals are often programmed to present information specific to a user’s needs and accessed via a personal log-in. There are various types, including knowledge portals, public web portals, government portals, market space portals, bidding portals and enterprise web portals.

Online banking sites are good examples of portals.

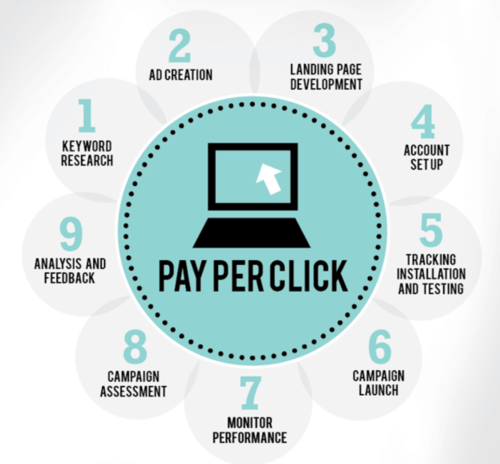

PPC (PAY PER CLICK)

An extremely popular digital marketing platform, whereby advertisers create an account and pay search engines, like Google and Bing, each time a user clicks on one of their ads (see AdWords). You are paying to rank higher in search results, and your ads will typically appear above or to the side of the organic listings.

PPC campaigns work using a bidding system i.e. the more you’re prepared to pay, the more likely you are to appear in the #1 position in the listings. When a surfer clicks on your ad, they will automatically be redirected to a URL of your choice, thereby increasing traffic to that webpage.

If you sell computer hardware and bid $1.50 on the keyphrase ‘computer printer’ and 100 users click on your PPC listing, you’ll be automatically billed $150 by the search engine company. The trick is to do your keyword research and make sure the content of your ad is high-quality.
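The arithmetic in the printer example can be written out as a short calculation. This is a rough sketch that assumes every click is charged at the full bid price, as in the example above; the function names and the figure of 5 sales are hypothetical and only there to show how cost per sale follows from the total spend.

```python
def campaign_cost(bid_per_click, clicks):
    """Total amount billed when every click is charged at the bid price."""
    return bid_per_click * clicks


def cost_per_sale(total_cost, sales):
    """Average advertising spend needed to win one sale."""
    return total_cost / sales


# The 'computer printer' example: a $1.50 bid and 100 clicks.
total = campaign_cost(1.50, 100)

# Hypothetical assumption: 5 of those 100 clicks turned into sales.
per_sale = cost_per_sale(total, 5)
```

Here `total` is 150.0, matching the $150 bill, and `per_sale` is 30.0, the figure to compare against your profit on each printer sold.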

The main advantages of PPC over traditional SEO strategies are its speed and flexibility. No waiting weeks or even months before your site finally appears in the first pages of the organic rankings. That means you can quickly adjust to market trends and the changing demands of potential customers. It can also be cost-effective, especially if you identify niche, long-tail keywords that your competitors have overlooked and failed to bid on. You could grab yourself a real bargain!

The downside of an effective PPC campaign is that it can be eye-wateringly expensive, especially if you engage in a costly bidding war with your industry’s big-hitters. PPC isn’t a scalable strategy – more traffic inevitably means more financial investment. Smart SEO techniques can often be more effective in the long run and result in more bang for your buck.

PPA (PAY PER ACTION)

A close cousin of PPC but the search engine operators only get paid when clicks result in conversions i.e. they result in actions from visitors, such as purchasing a product, registering for a service or signing-up for a free trial of some kind.

PPA is a good way to avoid costly click fraud and window-shopping. It also means you are offsetting your costs, because you only pay when you’ve generated either revenue or a high-quality sales lead. But you could still make a loss if those leads fail to convert and the cost the publisher (e.g. Google) is charging for your ad is too great. It’s important to negotiate good PPA rates. Do your homework, talk to your accountant or FD, and have a figure in mind before you start haggling. Then ensure you carefully check all your PPA and PPC reports, then weigh up their ultimate value to your business. Spreadsheets are your best friends!
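One way to arrive at a figure before haggling is to work out the break-even rate per lead. The sketch below is a simplified model, not an accounting method: the function names are hypothetical, and the $200 profit per sale, 25% lead-to-sale rate and 40 leads are invented numbers for illustration.

```python
def break_even_ppa_rate(profit_per_sale, lead_to_sale_rate):
    """Highest price per lead at which the campaign does not lose money."""
    return profit_per_sale * lead_to_sale_rate


def campaign_profit(leads, ppa_rate, profit_per_sale, lead_to_sale_rate):
    """Profit from the sales that leads produce, minus what the leads cost."""
    earned = leads * lead_to_sale_rate * profit_per_sale
    return earned - leads * ppa_rate


# Hypothetical figures: $200 profit per sale, 1 in 4 leads becomes a sale.
max_rate = break_even_ppa_rate(200.0, 0.25)

# Paying $60 per lead, above the break-even rate, produces a loss.
loss_example = campaign_profit(40, 60.0, 200.0, 0.25)
```

With these numbers `max_rate` is 50.0, and `loss_example` is -400.0, which shows how a campaign that only pays for genuine leads can still lose money when the agreed rate is too high.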

PROCESS AUTOMATION

Process automation involves leveraging technology to streamline and optimise repetitive tasks, workflows, and operations within an organisation. It encompasses the use of software, tools, and systems to automate manual processes, reducing human intervention and enhancing efficiency. By identifying repetitive tasks prone to human error or time-consuming activities, businesses can implement automation to expedite these processes, allocate resources more effectively, and improve overall productivity.

Automation spans various sectors and functions, including manufacturing, finance, customer service, and marketing. For instance, in manufacturing, robotic process automation (RPA) automates assembly lines, reducing errors and increasing output. In finance, automated invoicing, billing, and accounting systems streamline financial processes, improving accuracy and saving time. Customer service benefits from automated responses to common queries, freeing up human agents to handle more complex issues. In marketing, automated email campaigns, social media scheduling, and data analysis optimise outreach efforts, targeting audiences more effectively.

Process automation not only enhances operational efficiency but also allows employees to focus on higher-value tasks that require human creativity, critical thinking, and problem-solving skills. It enables businesses to scale operations, reduce costs, and remain competitive in an ever-evolving landscape by harnessing technology to streamline workflows and drive innovation.

PROPRIETARY METHOD

This phrase should set alarm bells ringing, as it’s often used in sales pitches by unscrupulous, so-called SEO ‘experts’, who claim they have a unique formula to send your webpages soaring to the top of SERPs. These purveyors of snake oil should be avoided at all costs. There is no magic bullet, just tried and tested SEO strategies. The worst-case scenario is that they employ black hat techniques that could see your website vanish from Google forever. Caveat emptor!

PRIVATE BLOG NETWORK (PBN)

PBNs were outlawed by Google back in 2014, as they were deemed to be a black hat SEO technique used for link-building purposes.

A PBN is a group of blogging sites owned by a single publisher and often hosted by domains like LiveJournal, Tumblr and Blogger. Google gets more sophisticated every day and will even sniff out blog networks containing high-quality content and impose sanctions of varying degrees, including de-indexing. Specific practices that incur Google’s wrath include the following:

- Posting low-quality blog content

- Selling links from your PBN

- Using the same IP address to host more than a single blog

- Hosting your blogs on flagged IP addresses

- Using penalised domains to post your blogs

- Restricting web crawler access

- Using similar anchor text for links from your network

QUERY

Refers to text entered into a search engine, in order to produce a result.

QUILLBOT

QuillBot is an advanced paraphrasing and rewriting tool powered by artificial intelligence, developed to assist users in generating high-quality written content efficiently. Launched in 2017, QuillBot employs machine learning algorithms to understand input text and produce rewritten versions that maintain the original meaning while enhancing clarity, coherence, and fluency. Its user-friendly interface and intuitive features enable writers, students, and professionals to improve their writing by effortlessly generating paraphrased sentences, summaries, and rephrased text. With additional functionalities like grammar checking and word suggestions, QuillBot serves as a valuable writing assistant for individuals seeking to enhance their productivity and refine their written communication skills. As an accessible and versatile AI-driven writing tool, QuillBot empowers users to create polished and engaging content across various domains, from academic papers to business documents and beyond.

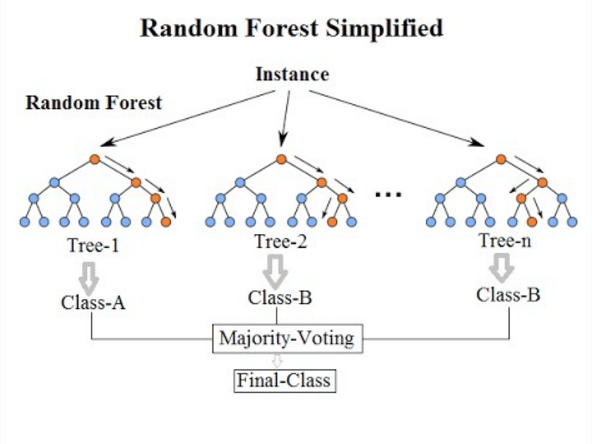

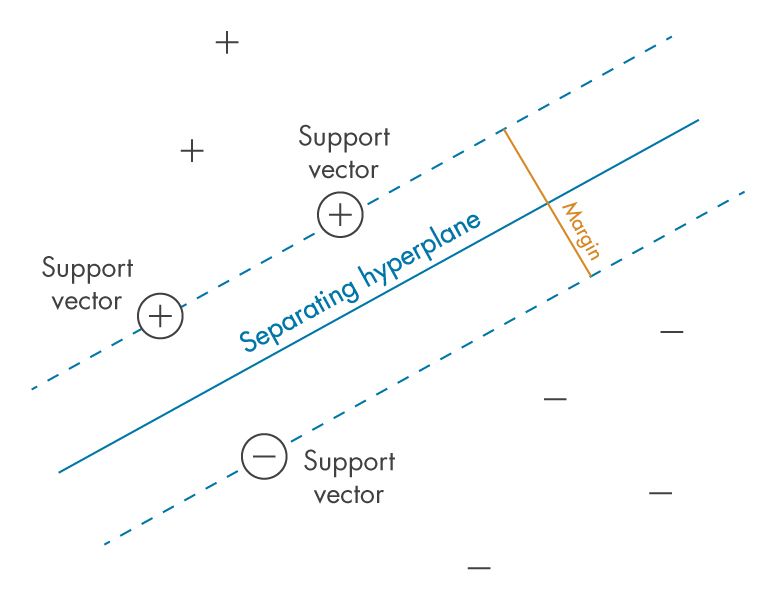

RANDOM FOREST

Random Forests are a powerful machine learning technique used for both classification and regression tasks. They are an ensemble learning method that builds multiple decision trees and combines their predictions to improve accuracy and robustness. Each tree in the Random Forest is trained on a random subset of the data and a random subset of features, resulting in a diverse set of trees that collectively make more accurate predictions than any individual tree. Random Forests are known for their ability to handle high-dimensional data, noisy data, and large datasets effectively. They are widely used in various domains, including finance, healthcare, marketing, and ecology, for tasks such as fraud detection, customer segmentation, and disease diagnosis, owing to their simplicity, interpretability, and ability to handle complex datasets.
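The combination of random data samples, random feature subsets and voting can be shown with a toy forest. This is a deliberately simplified sketch: each ‘tree’ is a single-split decision stump, the feature subset is chosen once per tree, and the visitor data (pages viewed, minutes on site) and labels are invented for illustration. Real projects would normally use an established machine learning library instead.

```python
import random
from collections import Counter


def train_stump(rows, labels, feature_indices):
    """Find the single feature/threshold split with the fewest errors."""
    best = None
    for f in feature_indices:
        for threshold in sorted({row[f] for row in rows}):
            left = [lab for row, lab in zip(rows, labels) if row[f] <= threshold]
            right = [lab for row, lab in zip(rows, labels) if row[f] > threshold]
            if not left or not right:
                continue
            left_label = Counter(left).most_common(1)[0][0]
            right_label = Counter(right).most_common(1)[0][0]
            errors = sum(lab != left_label for lab in left)
            errors += sum(lab != right_label for lab in right)
            if best is None or errors < best[0]:
                best = (errors, f, threshold, left_label, right_label)
    if best is None:
        # No usable split: always predict the most common label.
        majority = Counter(labels).most_common(1)[0][0]
        return (feature_indices[0], float("inf"), majority, majority)
    return best[1:]


def train_forest(rows, labels, n_trees=25, n_features=1, seed=0):
    rng = random.Random(seed)
    forest = []
    for _ in range(n_trees):
        # Bootstrap sample: draw rows at random, with replacement.
        picks = [rng.randrange(len(rows)) for _ in rows]
        sample_rows = [rows[i] for i in picks]
        sample_labels = [labels[i] for i in picks]
        # Random subset of features for this tree.
        features = rng.sample(range(len(rows[0])), n_features)
        forest.append(train_stump(sample_rows, sample_labels, features))
    return forest


def predict(forest, row):
    """Each tree votes; the most common answer wins."""
    votes = Counter(
        left if row[f] <= threshold else right
        for f, threshold, left, right in forest
    )
    return votes.most_common(1)[0][0]


# Hypothetical visitors: [pages viewed, minutes on site].
rows = [[1, 2], [2, 1], [2, 3], [3, 2], [8, 9], [9, 12], [10, 8], [12, 11]]
labels = ["browser"] * 4 + ["buyer"] * 4
forest = train_forest(rows, labels)
```

Calling `predict(forest, [11, 10])` classifies a highly engaged visitor as a `"buyer"`, while `predict(forest, [1, 1])` returns `"browser"`.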

RANK BRAIN

RankBrain stands as a pivotal component of Google’s search algorithm, leveraging artificial intelligence and machine learning to interpret and understand user search queries better. Introduced in 2015, RankBrain revolutionized how Google processes and ranks search results by using AI to comprehend the meaning behind complex and ambiguous search queries. It focuses on understanding the context and intent behind user searches, especially those with long-tail or conversational phrases, rather than solely relying on keyword matching.

This AI-driven system learns from vast amounts of search data to interpret and predict the most relevant results for queries that it hasn’t encountered before. RankBrain doesn’t replace Google’s search algorithm but acts as a supporting factor, helping to refine and improve search results by considering various factors beyond keywords. By understanding user intent, context, and search behavior, RankBrain assists in delivering more accurate and personalized search results, improving the overall search experience for users seeking information across a wide spectrum of topics and queries.

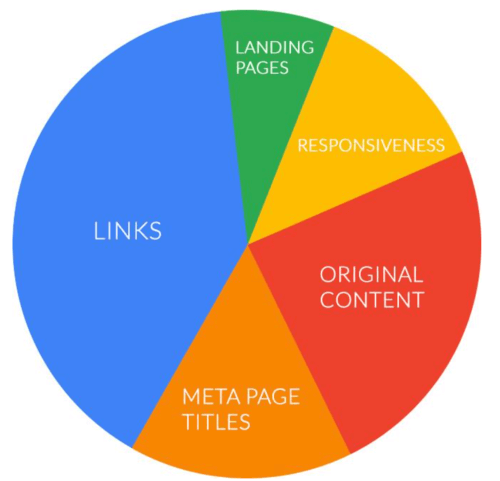

RANKING FACTOR

The factors that a search engine, such as Google or Bing, takes into consideration when determining where a page ranks in its SERPs.

While many factors are common knowledge, publishers are constantly moving the goalposts by adding new ones or changing the emphasis on their existing factors. Just like sharks, SEO specialists can never relax and stand still; they need to be constantly assessing their strategies and be on the look-out for algorithm changes. The ‘Big 3’ ranking factors that all SEO consultants should either know or be shot on sight are:

- Inbound and outbound links – must be high quality and natural

- Meta tags, meta keywords and meta descriptions

- The quality of page content – content is king!

But we live in a rapidly changing cyberspace and in 2016 the dominance of mobile phones led to Google switching to ‘mobile-first indexing’. This means that Googlebots will now primarily crawl and index mobile rather than desktop versions of websites. From an SEO perspective, mobile-friendliness is not just preferable but essential. Whatever is available on a desktop or tablet must now appear in mobile searches, too.

Page loading speed is another important ranking factor, with the optimum being 3 seconds for desktops and 2 seconds for mobiles. Webmasters must work hard until they get this right, as slow loading is also frustrating for the end user and few will have the patience to stick around.

Google confirmed back in 2014 that encryption is now a ranking signal, meaning a strongly encrypted HTTPS site will often rank higher than a standard HTTP version. As online security and fraud are of increasing concern, it’s highly likely that Google and other search engines will significantly increase the weight of this factor.

Another technical tweak a web designer should try is to add H1 and H2 headings to their landing page source code. A strong correlation has been found between this and higher ranking.

RECIPROCAL LINK

Reciprocal linking occurs when two sites link to one another for their mutual ranking benefit. Once popular, due to their low cost, reciprocal links are now viewed with suspicion by Google, which sees them as what they really are i.e. cheap, black hat attempts to manipulate SERPs rather than genuine votes for good content.

Some webmasters are concerned that reciprocal links will incur ranking penalties. However, it’s far more likely that the links will be devalued, thus rendering them rather pointless. From another standpoint, you might argue that lower SEO value is better than no value at all. Equally, there is also potential referral value in such links, and you may derive some traffic when searchers come across them. Again, better a small trickle than none at all.

If you must build reciprocal links, make sure they are relevant. If you run a taxi company in Darlinghurst, for example, don’t link to a hairdresser in Cronulla (or anywhere else!). It’s also not a great idea to link to your direct competitors. If you’re a dentist, try linking to toothpaste companies instead of other dental surgeries.

Lots of disparate backlinks to authority sites makes it tough for web crawlers to figure out what your business actually does. Keep on point and avoid linking to spammy sites or link farms. At best, this is grey hat SEO.

RECOMMENDATION ENGINE

Recommendation engines are powerful tools used in various industries to personalize content and make suggestions based on user preferences and behavior. These engines leverage data analysis and machine learning algorithms to predict and recommend items that users are likely to be interested in. In e-commerce, recommendation engines analyze past purchases, browsing history, and demographic information to suggest products or services that match a user’s interests. Similarly, in streaming services, recommendation engines analyze viewing history and user ratings to suggest movies, TV shows, or music. Content-based recommendation engines rely on the characteristics of items themselves, while collaborative filtering engines make recommendations based on user behavior and preferences, often using techniques like matrix factorization. Hybrid recommendation engines combine both approaches to provide more accurate and diverse recommendations. Overall, recommendation engines play a crucial role in enhancing user experience, increasing engagement, and driving revenue for businesses in today’s digital landscape.
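The collaborative filtering approach can be sketched in a few lines. This is a minimal illustration under simplifying assumptions, not how any particular store or streaming service works: it compares users with cosine similarity and scores unseen items by the ratings of similar users. The customer names, products and ratings are invented.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Similarity between two users' rating dictionaries (0 = nothing shared)."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = sqrt(sum(value * value for value in a.values()))
    norm_b = sqrt(sum(value * value for value in b.values()))
    return dot / (norm_a * norm_b)


def recommend(user, ratings, top_n=2):
    """Suggest items the user has not rated, weighted by similar users' ratings."""
    scores = {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        similarity = cosine_similarity(ratings[user], other_ratings)
        if similarity <= 0:
            continue  # ignore users with nothing in common
        for item, rating in other_ratings.items():
            if item not in ratings[user]:
                scores[item] = scores.get(item, 0.0) + similarity * rating
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


# Hypothetical customers and their product ratings (1 to 5).
ratings = {
    "alice": {"printer": 5, "ink": 4},
    "bob": {"printer": 5, "ink": 5, "paper": 4},
    "carol": {"laptop": 5, "mouse": 4, "paper": 1},
}
suggestions = recommend("alice", ratings)
```

Here `suggestions` is `["paper"]`: Bob’s tastes overlap with Alice’s, so the item he rated that she has not yet bought is recommended, while Carol shares nothing with Alice and is ignored.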

REDIRECT

There are many reasons for, and several methods of, redirecting traffic to a different URL without losing that precious link juice (see 301 REDIRECT and 302 REDIRECT).

REFERRER

The source of a website visitor. When visiting a webpage, the referrer is the URL of the previous page visited. Some SEOs like to track their referrers using web log analysis software, although some web browsers block this practice, in order to protect user privacy.

REGIONAL LONG TAIL (RLT)

When you search for a local service, Google’s algorithm is programmed to recognise your regional IP address and deliver local results. If you’re located in Surry Hills and search for a restaurant, the top results will reveal a list of local eateries. However, if you’re in Wollongong and perform that search, you will need to add the long tail keyphrase, “Surry Hills restaurants”, to guarantee the specific results you need. You need to bear this in mind when planning your SEO strategy.

REGISTRAR

A commercial company or organization that facilitates the registration of internet domain names. Such registrars must be accredited by a gTLD (generic top-level domain) registry or a ccTLD (country-code top-level domain) registry.

There is a huge choice of competing companies you can register your name with, all offering hosting packages of various types and costs. Many have expanded to offer email services, online marketing, e-commerce and even website creation. Popular international providers include GoDaddy, Tucows, Register.com, Namecheap and Network Solutions.

REINCLUSION

The most severe penalty a website can suffer is to be permanently or temporarily de-indexed by Google, as a result of one or more contraventions of the company’s draconian codes of practice, officially known as quality guidelines. These include (but aren’t limited to) buying in links, cloaking, hiding text, stacking titles, duplicating content and duplicating sites. De-indexing could also be the result of third-party legal action that obliges Google to shut you down.

So, what can you do about it? Firstly, prevention is better than cure, so you should avoid black hat techniques at all costs. The Webmaster Guidelines are there for a reason and ignorance is no defence! But if you wake up to find your site has disappeared into the ether overnight, you need to access Google’s Search Console to find out why. You will then have to make the necessary changes to your website (often a long and painful process) and submit a Reconsideration Request by again signing into Search Console. The Manual Actions section will list your misdemeanours and you can then request a review. This process usually takes a few days.

Rejection of your request will mean going back to see what you missed the first time. Unlike us mere mortals, Google’s all-seeing eye doesn’t miss a trick! We make no bones about it, de-indexing is a webmaster’s nightmare and often involves setting up a spreadsheet to list all your backlinks, then figuring out which are the offenders. You’ll also need to list anchor text, link contact details, nofollow and removal requests, and link status. In short, cancel any plans you may have made!

REINFORCEMENT LEARNING

Reinforcement Learning is a type of machine learning where an agent learns to make decisions by interacting with an environment. The agent receives feedback in the form of rewards or penalties based on its actions, allowing it to learn which actions lead to better outcomes over time. Through trial and error, the agent discovers optimal strategies for maximizing cumulative rewards. This approach is inspired by how humans and animals learn from experience. Reinforcement Learning has applications in various fields, from training autonomous robots to playing complex games like chess and Go, and it holds promise for solving real-world problems where traditional algorithms may struggle.
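The trial-and-error loop can be illustrated with tabular Q-learning, one common reinforcement learning method. This is a toy sketch: the five-position corridor, the reward of 1 for reaching the far end, and the learning settings (`alpha`, `gamma`, `epsilon`) are all assumptions chosen for illustration.

```python
import random

N_STATES = 5  # corridor positions 0..4; position 4 is the goal
ACTIONS = ("left", "right")


def step(state, action):
    """Move one position and return (next_state, reward, done)."""
    if action == "right":
        next_state = state + 1
    else:
        next_state = max(0, state - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def train(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    # The Q-table stores the agent's estimate of how good each action is.
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            best_value = max(q[(state, a)] for a in ACTIONS)
            best_actions = [a for a in ACTIONS if q[(state, a)] == best_value]
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)       # explore: try something random
            else:
                action = rng.choice(best_actions)  # exploit: use what was learned
            next_state, reward, done = step(state, action)
            future = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            # Nudge the estimate towards reward plus discounted future value.
            target = reward + gamma * future
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state
    return q


q_table = train()
policy = [max(ACTIONS, key=lambda a: q_table[(s, a)]) for s in range(N_STATES - 1)]
```

After training, `policy` is `["right", "right", "right", "right"]`: the agent has learned from rewards alone that heading right from every position is the quickest route to the goal.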

RELEVANCE

In this case, relevance is the extent to which the content of a webpage corresponds to the original search term typed by a user. This is a major search engine ranking signal and crucial to a positive user experience. All the high-quality links in the world won’t compensate for irrelevant content.

This is where classic, on-page SEO comes into its own. Well-written original and informative content should be supplemented with strong title tags and meta descriptions. Don’t forget, Google ranks pages not websites, so your keywords should be chosen to reflect the specific content of each and every page. If you sell car accessories, for instance, your windscreen-wiper page needs to be optimised for that search term, not generic car parts.

REPEAT VISITS

Website visitors who have visited in the past. Many SEO experts believe that Google’s algorithms favour sites that enjoy regular repeat visits and reward them with higher ranking. Frequent visits suggest that a page contains valuable information and is trusted by users. The website is then deemed to have authority.

REPLIKA

Replika is an AI-powered chatbot developed by Luka, Inc., designed to simulate conversation and offer companionship through text-based interactions. Launched in 2017, Replika utilizes natural language processing and machine learning algorithms to learn from user input and adapt its responses to simulate human-like conversations. Users can engage with their Replika in a wide range of topics, from casual chitchat to deep personal discussions, helping users to alleviate loneliness, reduce stress, and improve emotional well-being. Additionally, Replika offers features such as goal-setting, journaling, and mood tracking, providing users with personalized support and encouragement. With millions of users worldwide, Replika has garnered popularity as a virtual companion and self-improvement tool, demonstrating the potential of AI to enhance mental health and social connections.

ROBOTS.txt

The robots.txt file is used by web developers to restrict web crawler access to specific areas of a website. It uses the robots exclusion protocol (also known as the robots exclusion standard) to tell spiders not to process or scan those areas, via ‘allow’ and ‘disallow’ directives (see NOFOLLOW). Most common search engines have adopted the standard, including Google, Yahoo!, Bing, Baidu, Ask, DuckDuckGo, AOL and Yandex. Bear in mind that robots.txt is purely advisory and some webcrawlers will choose not to comply with it.

The file may also contain further webcrawler directives. The contents of the file are always publicly available, meaning others will be able to see which pages you don’t want to be crawled. So, avoid using robots.txt to conceal private or sensitive information.
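To see how a well-behaved crawler reads these rules, here is a small sketch using Python’s built-in `urllib.robotparser` module. The robots.txt contents, the `ExampleBot` name and the example.com address are all made up for illustration.

```python
from urllib.robotparser import RobotFileParser

# A hypothetical robots.txt: keep every crawler out of /admin/, allow everything else.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def may_crawl(path, agent="ExampleBot"):
    """Return True if the rules above permit this crawler to fetch the given path."""
    return parser.can_fetch(agent, "https://www.example.com" + path)

print(may_crawl("/admin/settings"))
print(may_crawl("/blog/"))
```

Note that this only shows what a compliant crawler would do. As mentioned above, nothing forces a badly behaved bot to run a check like this at all.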

RSS FEED (Really Simple Syndication)

RSS feeds, to have or have not? Because these feeds are syndicated sources of news and information and not unique to your website, they won’t add great weight to your SEO campaign. Despite this, they often bring with them the benefit of increased site traffic and frequently updated content, which attracts attention and keeps visitors on your site for longer (a ranking factor). And the more subscribers to the feed the better.

With RSS feeds, the usual SEO rule applies: make it relevant! An off-topic feed will be detected by Google and treated in the same way as an irrelevant backlink, i.e. it will be devalued and carry less weight than a natural link. A relevant feed will improve the user experience, which is always a good thing in the eyes of Google and its competitors.

SAFARI

Safari is a web browser developed by Apple. First released in 2003, it is the default browser on Apple devices. A Windows version was available until 2012, when it was discontinued. Safari 10 was the current version when this entry was written.

SALESFORCE EINSTEIN

Salesforce Einstein is an artificial intelligence platform embedded within the Salesforce Customer Relationship Management (CRM) system, designed to enhance sales, marketing, and customer service operations. Introduced in 2016, Salesforce Einstein leverages machine learning, natural language processing, and predictive analytics to provide valuable insights and automation capabilities to users. Its features include predictive lead scoring to identify high-potential sales leads, intelligent email and chatbot responses for customer service interactions, and personalized product recommendations for marketing campaigns. By enabling businesses to make data-driven decisions and streamline processes, Salesforce Einstein empowers organizations to deliver better customer experiences and drive revenue growth. Additionally, its integration with the broader Salesforce ecosystem allows for seamless deployment and customization, making AI accessible to businesses of all sizes.

SANDBOX

The alleged Google sandbox is thought by many in the SEO business to be a probationary period that all new websites must endure. This means that all your on-page and off-page SEO efforts could mean zilch in the short-term. It’s a bit like driving a car with the handbrake on, except that it’s ranking, not speed, that’s diminished.

It’s suspected that Google introduced the sandbox in March 2004, to reverse the tide of spammy websites using purchased links to rapidly ascend its rankings, straight from launch. However, many believe that the fabled sandbox is a figment of paranoid webmasters’ imaginations and its effect merely a consequence of existing algorithms. Sites consigned to the sandbox for longest (up to 6 months in some extreme cases) tend to be those targeting competitive keywords, whereas those that don’t tend to escape relatively quickly. The dampening effect will gradually peter out over time. If you have strong PageRank and high-quality links but still don’t figure in searches, you’re probably in the sandbox.

Frustratingly, even registering with AdSense or AdWords won’t provide an escape from the sandbox – you just have to knuckle down and serve your time! But a webmaster should use it constructively, by continuing to optimise his or her site with world-class content and high-quality links, so when probation is over, the site will rise like a hot-air balloon.

SCHEMA MARKUP

Schema markup is a structured data vocabulary that helps search engines understand the content on web pages more effectively. It provides a standardised way to add additional context and meaning to the content, allowing search engines to display rich snippets or enhanced results in search engine results pages (SERPs). This markup language uses a set of predefined tags or microdata to describe different types of information, such as events, reviews, products, organisations, and more.

Implementing schema markup offers several benefits. Firstly, it enhances the visibility and appearance of search results by enabling rich snippets, which can include additional information like ratings, prices, dates, and images. These enhanced snippets make search results more engaging and informative, potentially increasing click-through rates. Secondly, schema markup helps search engines better understand the content and context of a web page, which can positively impact rankings by improving relevance and providing clearer information to search engine crawlers.

By incorporating schema markup into their websites, businesses can enhance their online visibility, improve the presentation of search results, and potentially gain an edge over competitors by providing more informative and appealing snippets in search engine listings. It’s a valuable tool for optimising content for search engines and enhancing the user experience by providing more detailed and relevant information directly in search results.
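As a rough illustration, the sketch below builds a schema.org ‘Product’ snippet for a hypothetical windscreen-wiper page. It uses JSON-LD, which is another common way of writing schema markup alongside the microdata mentioned above. The product name, price and rating figures are invented, and `product_jsonld` is simply a helper name chosen for this example.

```python
import json

def product_jsonld(name, price, currency, rating, review_count):
    """Build a schema.org Product snippet as a JSON-LD <script> tag."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"

# Hypothetical product details, for illustration only.
snippet = product_jsonld("Premium Windscreen Wiper Blades", 24.99, "AUD", 4.6, 87)
print(snippet)
```

The printed block would typically be pasted into the page’s HTML. Whether a search engine then chooses to show a rich snippet is entirely up to the search engine.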

SCRAPE

Scraping is the unauthorised, black hat practice of copying content from popular websites and publishing it on your own site, as if it was original. It’s one of the tasks of Google’s Panda algorithm update to seek out duplicate content and punish it with sanctions of varying degrees. Scraping is usually done using automated bots (small programs). Avoid this form of plagiarism at all costs and focus on using only original content.

SEARCH ENGINE

The dictionary definition of a search engine is: “A program that searches for items in a database that correspond with keywords inputted by the user. Used especially for finding internet sites”. Obviously, we’re talking here about the likes of Bing, Yahoo!, Ask, AOL, Baidu and the search engine that’s now so ubiquitous that it’s entered the English language as a much-used verb, i.e. Google.

For SEO purposes, it’s important to know how search engines work. They have two primary functions – crawling the worldwide web to compile an index and presenting users with lists in the form of pages of websites they determine to be the most relevant. They do this by using programs known variously as spiders, Googlebots or webcrawlers, to follow paths known as links. When they encounter a webpage, file or image, they decipher their code and store it in huge databases, which are held on thousands of computers in secure data facilities all over the world. When a user enters a search term, the search engine scans its billions of pages of data to provide a relevant answer to the query. In addition, it ranks the results according t

The most popular search engines with approximate numbers of unique monthly visitors are as follows:

- Google: 1.8 billion

- Bing: 450 million

- Yahoo!: 325 million

- Ask: 260 million

- AOL: 135 million

On average, every Google query must travel 1,500 miles to a data centre and back. This gigantic search engine currently processes 40,000 queries every second or 1.2 trillion per year! Not bad for a company only founded in 1998.

Without search engines, there would be no SEO and this glossary wouldn’t exist! It’s important to note that websites should be optimised for people first and search engines second, which means the user experience must be prioritised.
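The crawl, index and look-up process described in this entry can be pictured with a toy example. The sketch below is a vastly simplified stand-in for a real search engine: it builds an ‘inverted index’ (a lookup from each word to the pages containing it) for three made-up pages and then answers queries from that index. The page addresses, page text and function names are all assumptions for illustration.

```python
from collections import defaultdict

# Hypothetical pages a crawler might have collected from a car accessories site.
PAGES = {
    "/wipers": "windscreen wiper blades for all car models",
    "/mats": "rubber car mats and boot liners",
    "/about": "about our car accessories shop",
}

def build_index(pages):
    """Map every word to the set of pages it appears on (an inverted index)."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in text.lower().split():
            index[word].add(url)
    return index

def search(index, query):
    """Return the pages that contain every word in the query, in alphabetical order."""
    words = query.lower().split()
    if not words:
        return []
    matches = set.intersection(*(index.get(word, set()) for word in words))
    return sorted(matches)

index = build_index(PAGES)
print(search(index, "car"))
print(search(index, "windscreen wiper"))
print(search(index, "roof rack"))
```

Because the index is built in advance, answering a query is a quick lookup rather than a fresh read of every page. This toy version makes no attempt to rank the matches.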

SEARCH ENGINE MARKETING (SEM)

Often shortened to “search marketing”, this term refers to the promotion of a website in search engines in order to increase visitor traffic, typically by employing a mix of pay per click advertising campaigns and paid inclusion, as opposed to unpaid SEO techniques.

Google AdWords, Yahoo Search Ads and Bing Ads are the most popular marketing platforms for SEM. All have great tutorials that will help you plan your marketing strategy.

SEARCH ENGINE OPTIMISATION (SEO)

The science of increasing visitor traffic to a website by achieving the highest possible rank on a search engine’s results pages. Page #1 in Google is the SEO holy grail, because it’s by far the biggest search engine on the planet.

Your SEO techniques must comply with Google’s quality guidelines or you could risk incurring a ranking penalty or even see your site de-indexed and disappear from the listings for months or even permanently, depending on the gravity of your indiscretion. Google is notoriously omniscient and unforgiving! The three primary pillars of SEO are: Powerful Keywords, High-Quality Backlinks and Great Content. Every strategy must achieve the right balance of these factors to be successful in the long-term. Beyond these three, there are literally thousands of other ranking signals used by Google and other search engines, and they are constantly being tweaked and adjusted. No one said SEO is easy!

Reaching any search engine’s first page is a huge challenge and one that only the finest SEO specialists (like SEO North Sydney!) can rise to. It takes experience, in-depth knowledge, tried and tested techniques, organization and fierce determination, to have a realistic hope of taking a shot at the title.

SEARCH ENGINE SPAM

Spamming is a deliberate attempt to manipulate search engines into returning irrelevant, redundant or poor-quality results, in order to promote a website or particular webpage. This practice usually shows little consideration for the needs of users.

Black hat web developers have a whole range of crafty techniques in their spam toolbox, although Google and other search engines are now wise to most of them and quick to impose penalties on those that transgress their quality guidelines. Below is a list of common spamming methods:

- Irrelevant keywords

- Keyword stuffing

- Keyword stacking

- Duplicate or scraped content

- Redirects

- Link farms

- Doorway pages

- Cloaking

- Hidden or minute text

- Domain spam

- Hidden backlinks

- Page swapping

If you come across a spammy website, it’s easy to report it to the various search engine admins, who are keen to resist any attempts to subvert their ranking algorithms.

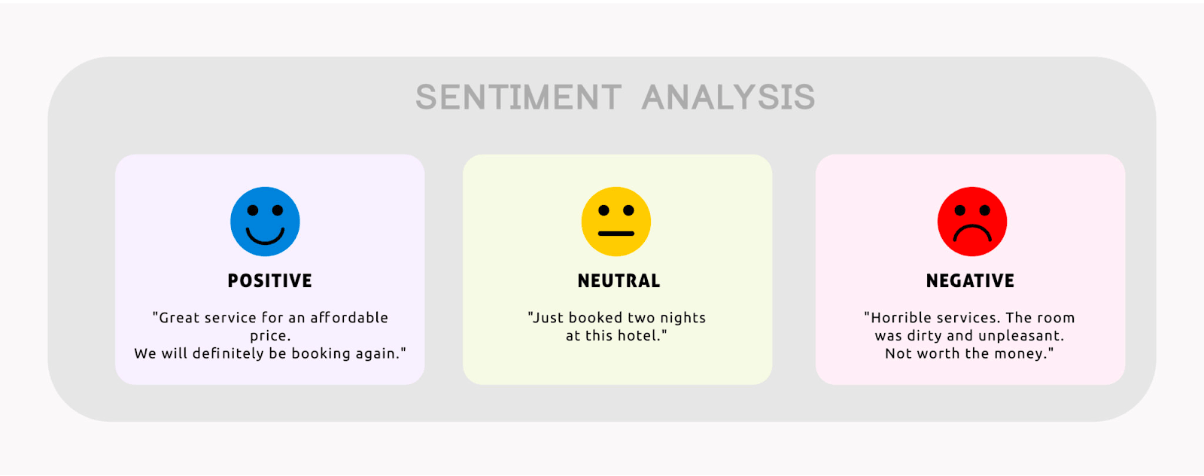

SENTIMENT ANALYSIS

Sentiment analysis, also known as opinion mining, refers to the process of using natural language processing, machine learning, and computational linguistics to analyse and determine the sentiment expressed in text data. It involves identifying, extracting, and understanding subjective information from text, gauging whether the expressed sentiment is positive, negative, or neutral. The primary goal of sentiment analysis is to assess the emotional tone, opinions, attitudes, or feelings conveyed within text data, whether it’s from social media posts, customer reviews, surveys, or any other form of textual content.

This analytical technique has diverse applications across various industries. Businesses often use sentiment analysis to gain insights into customer opinions and perceptions about their products, services, or brand. By analysing sentiments expressed in customer feedback, reviews, or social media mentions, companies can understand customer satisfaction levels, identify trends, and make data-driven decisions to improve products or services. Moreover, sentiment analysis aids in monitoring public opinion, political discourse, market trends, and even in detecting potential issues or crises that require immediate attention, offering valuable insights for decision-making and strategy development.
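To make the idea concrete, here is a deliberately simple sketch that labels short reviews as positive, negative or neutral by counting words from two small word lists. Real sentiment analysis tools rely on machine learning and far richer language models. This word-counting approach is a swapped-in simplification, and the word lists and sample reviews are invented for the example.

```python
# Tiny, hypothetical word lists. Real tools use far larger vocabularies or trained models.
POSITIVE = {"great", "love", "excellent", "happy", "fast"}
NEGATIVE = {"bad", "slow", "terrible", "hate", "broken"}

def classify(text):
    """Label text by comparing how many positive and negative words it contains."""
    words = [word.strip(".,!?").lower() for word in text.split()]
    score = sum(word in POSITIVE for word in words) - sum(word in NEGATIVE for word in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

for review in [
    "Great service, love it!",
    "Terrible and slow delivery.",
    "The parcel arrived on Tuesday.",
]:
    print(classify(review), "-", review)
```

A counter like this is easily fooled by sarcasm or by phrases such as ‘not bad’, which is one reason the real thing leans on machine learning.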

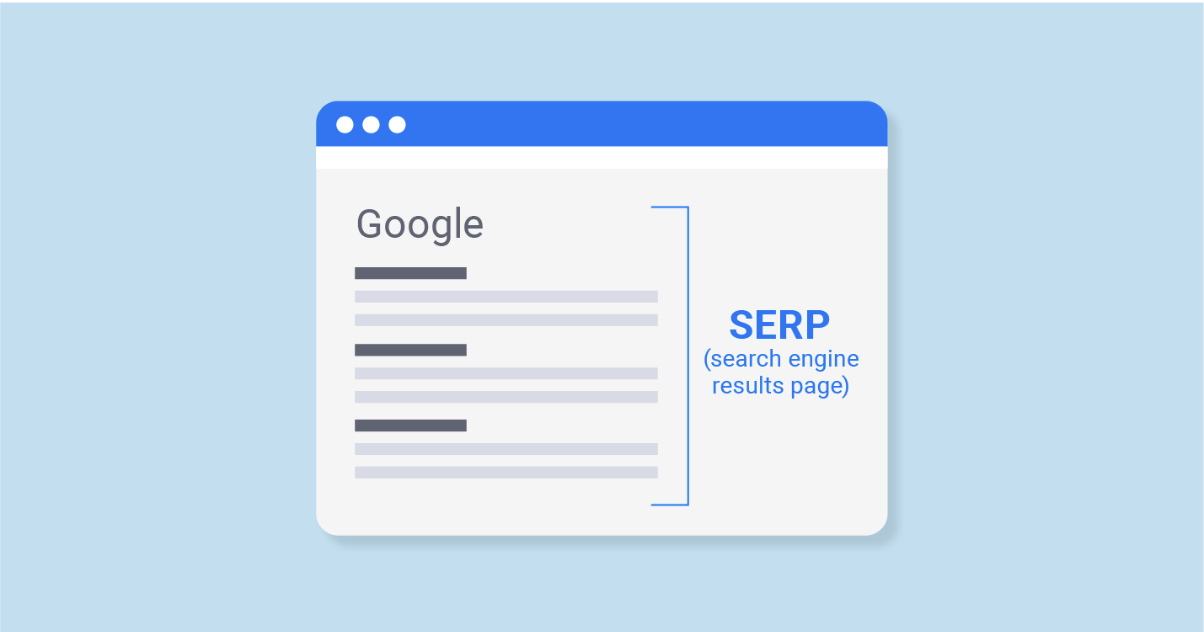

SERP

Originally, SERP was short for ‘Search Engine Results Page.’ Meaning that if you’re on the first page of Google, this is SERP #1. If you’re on page 2, you’re SERP #2. However, over time this acronym has evolved to mean ‘Search Engine Ranking Position.’ So if you’re first in Google’s organic search, you’re SERP #1. If you’re 2nd in Google, you’re SERP #2. And if you’re halfway down page 2, you’re SERP #15 (as there are usually 10 organic listings per search page), etc.
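The link between the two meanings is simple arithmetic. As a quick sketch, assuming the usual 10 organic listings per page (the function name `page_for_position` is just a label for this example):

```python
def page_for_position(position, per_page=10):
    """Return the results page on which a given ranking position appears."""
    if position < 1:
        raise ValueError("position must be 1 or higher")
    return (position - 1) // per_page + 1

print(page_for_position(1))   # first organic listing
print(page_for_position(10))  # last listing on the first page
print(page_for_position(15))  # halfway down the second page
```

So a ranking position of 15 sits on page 2, which matches the example above.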

Listings are displayed in response to a user entering a keyword query into a search engine’s search box. The results can be natural (organic) or in the form of sponsored advertisements (pay per click marketing campaigns). Paid ads tend to be displayed at the top of the page to make them clearly distinguishable from the organic listings (and to make Google more money!). Depending on the search term used, a single enquiry may yield anything from zero pages to millions. From an SEO perspective, page 1 is the target, as few surfers drill further into the SERPs, with 92% of people not going to page 2 of their search. And 61.5% of all organic search is in the top 3 on page 1.

Natural results are determined by the search engine algorithms and usually ranked in terms of relevance, whereas paid ads achieve their rank through a keyword bidding auction. In Google, organic results can have a keyword or location bias, presenting companies and services in the area where the searcher is based, unless a specific city, town or other geographical parameter is specified in the enquiry.

SILOING

Siloing, in the context of website architecture and SEO, refers to the organizational structure of content within a website where related pages are grouped into thematic clusters or silos based on their topic or relevance. The goal of siloing is to create a hierarchical structure that organizes content in a logical and interconnected manner, enhancing both user experience and search engine visibility. Siloing involves grouping related content into distinct sections or categories, ensuring that each silo focuses on a specific theme or topic, and the content within a silo is closely related and internally linked.