The Digital Marketing Bible (A-K)

Everything You Ever Wanted to Know About Digital Marketing (But Were Afraid to Ask!)

Let’s not beat around the bush: digital marketing is complicated! Yes, sure, it’s easy to understand at first blush, but when it comes to making it work for you, it proves oh-so-hard to implement. How many times has your SEO company obfuscated with technobabble about topic A or topic B, only to leave you more confused at the end of the call than at the beginning?

Well, luckily for you, we here at SEO North Sydney & Web Design appreciate that a business owner’s time is VALUABLE. Which is why we’ve created the Digital Marketing Bible for you. Think of it as a lexicon. An A-Z of EVERYTHING related to the digital marketing industry. All the helpful content presented is factually correct and 100% accurate. Plus, answers are deliberately kept short, sharp, and to the point. Because you’re a busy person with a business to run, and you just want the FACTS, not the technobabble.

So, if you’ve got a question about something to do with digital marketing, simply type the topic into the search box below (e.g. ‘AdWords’, ‘ChatGPT’ or ‘Schema’), and you will be instantly transported to the answer you need. Alternatively, you can simply scroll down the page and devour all the information presented like a savant at an all-you-can-eat knowledge buffet! From ‘A.I’ to ‘Zesty.io’ and every topic in between, the Digital Marketing Bible is your one-stop shop for everything you ever wanted to know about digital marketing but were afraid to ask!

301 REDIRECT

Stifle that yawn, because the 301 redirect is an indispensable weapon in your SEO arsenal! There are many reasons why you may want to move a web page, perhaps to a totally different website or elsewhere within the existing one, but the last thing you want is to waste all the ranking benefits or ‘link juice’ the original page has accrued over time. This is where the 301 redirect comes riding to the rescue, by immediately letting search engines know that your content has permanently moved. It will then automatically point all visitor traffic to a new URL of your choice.

A 301 redirect is generally reckoned to carry over 90% of your link juice – old backlinks, site visits and page rank – to the new location, even if that location has an entirely different domain name. Clever, eh? How it does this is best left to us geeks but, trust us, the 301 redirect is a godsend. The alternative scenario would be all that precious juice going down the drain and your visitors receiving a ‘404-error’ message, which is both frustrating for them and bad business for you.

302 REDIRECT

If you need to move a web page or even a whole site to a new URL for a temporary period, perhaps so it can be redesigned, you need to use a 302 redirect. A 302 redirect tells search engines to keep the original page or domain indexed, while pointing traffic to the temporary URL. Because 302 redirects are often quicker and easier to set up than 301 redirects, webmasters sometimes lazily opt for them even when a move is permanent. Some also believe it’s a crafty way to get around search engines’ built-in aging delay (the months it generally takes for Google and others to list a new site for competitive keywords). This is bad SEO practice and will inevitably lead to problems further down the line: a 302 redirect should only be used when the move really is temporary.
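For the geeks among you, here is a minimal Python sketch of what a web server does when it issues a redirect. It assumes a hypothetical redirect table (the paths and example.com URLs are made up for illustration) and uses only Python’s standard library. Your own web server or CMS will have its own way of configuring this, so treat it as an illustration rather than a recipe.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical redirect table: old path -> (status code, new URL).
REDIRECTS = {
    "/old-services": (301, "https://www.example.com/services"),  # moved for good
    "/menu": (302, "https://www.example.com/menu-temporary"),    # back soon
}


def resolve(path):
    """Return (status, location) for a requested path.

    Unknown paths get a 404 with no location.
    """
    if path in REDIRECTS:
        return REDIRECTS[path]
    return (404, None)


class RedirectHandler(BaseHTTPRequestHandler):
    """Answers every GET with the status code chosen by resolve()."""

    def do_GET(self):
        status, location = resolve(self.path)
        self.send_response(status)
        if location:
            # The Location header tells browsers and crawlers where to go next.
            self.send_header("Location", location)
        self.end_headers()


def serve(port=8000):
    """Start a local test server (not called automatically)."""
    HTTPServer(("localhost", port), RedirectHandler).serve_forever()
```

Calling serve() and visiting localhost:8000/old-services in a browser should bounce you to the new URL. The only difference between the permanent and temporary cases is the number the server sends back.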

404 NOT FOUND

Houston, we have a problem! We’ve all landed expectantly on a web page, only to be frustrated by a ‘404 Page Not Found’ error message. This response code is part of the HTTP protocol and is automatically generated by web servers. It indicates that the ‘client’, i.e. your browser, has managed to contact the server but the server could not find the page you were looking for. This commonly occurs when a URL has moved or been deleted and an indolent webmaster has failed to employ 301 or 302 redirects.

Most 404 errors are client-side and usually the result of a wrongly typed URL, but for SEO purposes it’s important that you investigate any reports of 404s that you receive, just in case it’s an issue with your site’s back end.

ADEPT

Adept is an AI company (Adept AI Labs), founded in 2022 by former OpenAI and Google researchers; it is not an OpenAI product. Building on the transformer architecture that also underpins models like GPT (Generative Pre-trained Transformer), Adept set out to create AI that can carry out tasks in software on a user’s behalf, working from plain-language instructions. Its first model, ACT-1 (Action Transformer), was announced in 2022.

AdWORDS

AdWords (rebranded as Google Ads in 2018) is a vast digital advertising platform operated by search engine behemoth Google. With well over 60% of all online searches happening via Google, the power of AdWords can’t be ignored, even if your site already ranks No.1 in organic search lists.

AdWords advertisements appear either above or to the side of Google’s organic search lists and are distinguished from organic results by a tiny ‘Ad’ label. Google makes part of its vast fortune by charging you, the advertiser, each time a surfer clicks on one of these ads, hence the term ‘pay-per-click’ (PPC).

AdWords works in a similar way to an auction – the more you’re prepared to pay in relation to other bidders, the higher your ad is likely to appear on the page. But (there’s always a but in our crazy cyber-world) Google isn’t just interested in your money, it also wants to provide searchers with a great experience. This means each ad is automatically assigned a quality score, based primarily on its relevance. For example: say you want to buy a hippo online and you click on a sponsored hippo ad that redirects you to the homepage of MassivePets.com, instead of a page specifically selling hippos. You wouldn’t be happy and neither would Google, who would consider it lazy and mark the offending ad down, even if the owner was paying more than a rival bidder.
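To see how a cheaper bid can beat a pricier one, here is a rough Python sketch. Google doesn’t publish its exact formula, so this assumes the widely quoted simplification that an ad’s rank is its bid multiplied by its quality score. The ads, bids and scores are all made up for illustration.

```python
def rank_ads(ads):
    """Order ads from best to worst position.

    Assumption: rank = bid x quality score, a common simplification
    and not Google's published method.
    """
    return sorted(ads, key=lambda ad: ad["bid"] * ad["quality"], reverse=True)


# Hypothetical bidders in the hippo auction.
ads = [
    {"name": "MassivePets homepage", "bid": 2.00, "quality": 3},
    {"name": "Hippo sales page", "bid": 1.50, "quality": 8},
]

for position, ad in enumerate(rank_ads(ads), start=1):
    print(position, ad["name"])
```

With these invented numbers the relevant hippo page scores 12 against the homepage’s 6, so it takes the top spot despite the lower bid.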

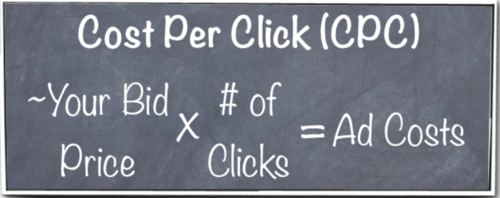

To squeeze the most juice out of an AdWords campaign you need to work out exactly how much you can afford to pay for each click – known as ‘cost-per-click’ (CPC) – then ensure your ad features the right keywords and links to a relevant URL. Research is vital if you don’t want to blow your marketing budget on wasted clicks.

An alternative to CPC is ‘cost-per-impression’ pricing, abbreviated (somewhat confusingly) to CPM, which stands for ‘cost per mille’, i.e. cost per thousand. In this scenario, you set the amount you want to pay Google for each set of one thousand views (impressions) your ad generates.
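If you want to compare the two pricing models for your own campaign, the sums are simple enough to sketch in a few lines of Python. The click counts and prices below are hypothetical, so plug in your own figures.

```python
def cpc_cost(clicks, cost_per_click):
    """Total spend when you pay for each click."""
    return clicks * cost_per_click


def cpm_cost(impressions, cost_per_thousand):
    """Total spend when you pay per thousand impressions."""
    return impressions / 1000 * cost_per_thousand


# Hypothetical campaign: 10,000 impressions, 200 of which turn into clicks.
impressions = 10_000
clicks = 200

print(cpc_cost(clicks, 1.50))        # spend at $1.50 per click
print(cpm_cost(impressions, 20.00))  # spend at $20 per thousand impressions
```

With these made-up numbers, CPC works out at $300 and CPM at $200, but a lower click rate would tip the balance the other way.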

AdSENSE

AdSense is (in a sense) the reverse of AdWords, in that it’s the website owner who gets paid by Google when visitors click on ads within their site – hurrah, we hear you cheer! Practically anyone over 18 years of age with opposable thumbs and a Gmail account can set up an AdSense account and immediately begin monetising their website, blog or YouTube channel. It’s as easy as completing an online form, copy’n’pasting some JavaScript code to the back end of your site, or making some quick changes via your domain host.

AdSense content can be simple text, images or video and, just like AdWords, generates revenue on a pre-agreed, cost-per-click (CPC) or cost-per-thousand-impressions (CPM) basis. N.B. – Google only pays commission after your site has generated a minimum of $100 in revenue.

AdSENSE SITES – MFAs

Third party MFA (Made for AdSense) sites are frowned upon by Google, which expends a great deal of resources on seek-and-destroy missions against them. MFAs often consist of poorly written ‘splogs’ (spam blogs) stuffed with keywords, or pages crammed with adverts designed to generate income for the site owners each time visitors click on them.

ALGORITHMS

Simply put, an algorithm is a series of steps required to complete a given task. For instance, if you’re making a cheese sandwich the algorithm would include slicing some bread, buttering it and putting the cheese in. Similarly, in the world of computer science, an algorithm is a series of steps or rules by which a computer program accomplishes a given task. So far, so good? Still thinking about that sandwich, aren’t you?!

Now let’s move on to the algorithms that concern us here, i.e. Search Engine Algorithms. It’s an uninspiring term, so Google saw fit to jazz it up by giving its algorithm updates names like Hummingbird, Penguin and Panda – no, we don’t understand either! The trick for any SEO specialist worth their salt is to understand how these algorithmic formulae work and incorporate this knowledge into websites, so that they climb relentlessly to the top of search engines and remain there.

So, what exactly do these magic formulae consist of? Well – we’re not telling you. Or, to be precise, the nerds running the search engines prefer to regard them as their secret sauces. Plus, algorithms vary with each search engine, so Google’s will be different to Bing’s and theirs will be different to Yahoo’s and so on. However, there are certain things all these algorithms have in common.

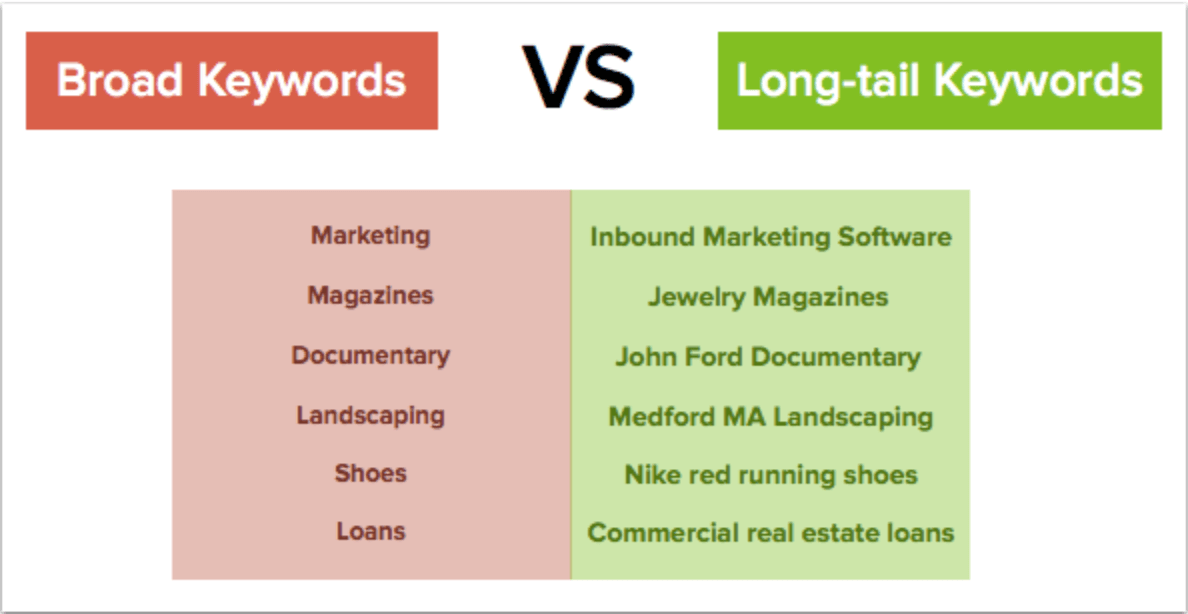

Content Relevance – If you ask the average Luddite what they know of SEO, their eyes will glaze over. However, if you nudge them, they may eventually admit to hearing of keywords. Keywords are what search engine spiders look for when crawling the web in order to judge relevancy, which remains one of the most crucial ranking factors. A top SEO guru will be adept at achieving optimum keyword density, without stepping over the line and incurring penalties for ‘keyword stuffing’. Google and its competitors love to see keywords in page titles and introductory text.

Individual Factors – As mentioned, algorithms differ between search engines and it’s important to remember this when planning your Earth-conquering SEO strategy. These differences are why results for the same search query vary between search engines. It’s also worth knowing that some penalise for ‘spamming’ (nefariously manipulating search results) while others don’t.

Off-page Factors – While on-page SEO techniques (e.g. keyword optimisation) are indispensable, off-page techniques are an equally important part of this complex recipe. Fundamental to your off-page strategy are the number and quality of inbound links to your website. But there are many other factors, including social media connections, blogging and link baiting.
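The keyword density mentioned under Content Relevance is easy to measure for yourself. The Python sketch below simply counts how often a single keyword appears as a percentage of all the words on a page. It is a simplified illustration: search engines don’t publish an ideal figure, and the sample copy is invented.

```python
import re


def keyword_density(text, keyword):
    """Return the keyword's share of all words in the text, as a percentage."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return 0.0
    return 100 * words.count(keyword.lower()) / len(words)


# Hypothetical page copy that is clearly overdoing it.
copy = "Hippo food for your hippo. Buy hippo food online today"
print(keyword_density(copy, "hippo"))
```

Here ‘hippo’ makes up three of the ten words, which is exactly the kind of copy that reads badly and risks a keyword-stuffing penalty.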

AFFILIATE WEBSITES

Affiliate websites are 3rd party platforms, built by individuals to market the goods or services of other businesses. There are many upsides to running one of these sites, including low start-up costs, flexible hours, minimal risk and the freedom to run your operation from anywhere with a decent internet connection. You don’t need to invest in stock and neither do you need expensive premises in the real world. What you will need is plenty of time, great organisational skills and unwavering commitment. It’s not simply a case of ‘build it and they will come’, you must put in that hard graft, so don’t start leafing through those Sunseeker yacht brochures quite yet!

Affiliate marketers (also known as publishers) make their money from the commissions paid by merchants when site visitors click on ads that lead potential purchasers directly to the merchants’ own sites. For a webmaster, the skill is in creating an attractive website with world-class SEO attributes that doesn’t resemble an ugly riot of banner ads and spam text that will incur the wrath of the omniscient and omnipotent Google-beast. If you’re in for the long-haul, you can build a profitable business and enjoy the buzz of commissions rolling in when you’re either asleep, in the pub or getting jiggy with it. The scale of these commissions varies from merchant to merchant and the range is anything between 1% and 75% of the sale price. The trick is to create a site that attracts well-heeled visitors looking to spend money on big ticket items that pay large commissions. It will also be rewarding to build as many sites as you can effectively manage, to spread your net as wide as possible.

Keeping abreast of sales can be challenging, especially if you have dozens of sites, so you may decide to take advantage of affiliate syndicates, such as Shareasale.com or Linkshare.com, who will track your accounts and recover your commission for a small fee.

A.I

Artificial Intelligence (AI) has emerged as the cornerstone of modern technological advancements, reshaping industries and revolutionising the way we interact with technology. At its core, AI refers to machines simulating human intelligence processes, from learning and reasoning to problem-solving. This groundbreaking technology has permeated diverse fields, from healthcare and finance to transportation and entertainment. Its applications are far-reaching, introducing automated systems that analyse vast amounts of data in milliseconds, enabling predictive insights and informed decision-making.

One of the most profound impacts of AI is its ability to streamline operations across various sectors. For instance, in healthcare, AI-powered diagnostic tools enhance accuracy and speed in identifying diseases, potentially revolutionising patient care. In the realm of finance, predictive algorithms aid in investment strategies, mitigating risks and maximising returns. Moreover, the integration of AI in autonomous vehicles holds the promise of safer transportation systems, reducing accidents and optimising travel efficiency. However, the advancement of AI also raises ethical and societal concerns, prompting discussions on data privacy, job displacement, and the ethical implications of autonomous decision-making by machines. As AI continues to evolve, the balance between innovation and responsible implementation becomes increasingly crucial in shaping our future.

ALT TEXT

Alt text (alternative text) is a short description of a website image, stored in the page’s HTML code rather than displayed on screen. It is read by search engines and is also valuable for informing visually impaired surfers, using screen-readers, what’s on the page.

So, what does alt text mean for your SEO strategy? It’s extremely important to make images SEO-friendly and the best way to do this is by optimising alt text descriptions, but without keyword-stuffing. Although most users won’t see this text, Google’s all-seeing spiders will seek it out and it will contribute to your page ranking.

There are instances when alt text needs to be more than a simple visual description of the picture and include its function. For example – a warning sign displaying a picture of a shark shouldn’t be described as “Shark picture”. It needs to be “Shark picture warning sign”. This will further assist Google in evaluating the image and returning the best search results, which will in turn benefit your site’s SEO.
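A quick way to find images that are missing alt text is to scan a page’s HTML for img tags. The Python sketch below does this with the standard library’s HTML parser, and the sample page and file names are made up. Bear in mind that purely decorative images are sometimes given an empty alt on purpose, so treat the results as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class AltTextAuditor(HTMLParser):
    """Collects the src of every <img> whose alt text is missing or blank."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        if not (attributes.get("alt") or "").strip():
            self.missing.append(attributes.get("src", "(no src)"))


def images_missing_alt(html):
    """Return the image sources in the given HTML that have no alt text."""
    auditor = AltTextAuditor()
    auditor.feed(html)
    return auditor.missing


# Hypothetical page fragment.
page = (
    '<img src="shark.png" alt="Shark picture warning sign">'
    '<img src="logo.png">'
    '<img src="banner.png" alt="">'
)
print(images_missing_alt(page))
```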

AMAZON AI

Amazon AI encompasses Amazon Web Services (AWS)’s extensive suite of artificial intelligence services and tools designed to enable developers to integrate AI capabilities into their applications with ease. These services cover a wide range of AI functionalities, including natural language processing, computer vision, speech recognition, and machine learning. With offerings such as Amazon Rekognition for image and video analysis, Amazon Polly for text-to-speech conversion, Amazon Lex for building conversational interfaces, and Amazon SageMaker for machine learning model development and deployment, developers can leverage powerful AI technologies without the need for extensive expertise in data science or AI. Amazon AI enables businesses to enhance customer experiences, automate processes, and drive innovation across various industries, while benefiting from AWS’s scalability, reliability, and security infrastructure. If you’re curious about Amazon’s product algorithms and how you can do well with your products, read more.

ANALYTICS

Where would we SEO specialists and webmasters be without tools like Google Analytics or its many alternatives, like Piwik, KISSmetrics and Woopra – and where do they get these bizarre names?

Website analytics reveal useful information about site traffic, often in real-time, and are crucial to the development and refinement of effective SEO strategies. Whilst they provide vast amounts of data reporting, interpreting it can be tricky and the learning curve is as steep as the Eiger.

Google Analytics (undisputed King of the Hill if judged on sheer numbers) divides its functions into three main categories:

- Data Collection & Management

- Data Analysis, Visualisation & Reporting

- Data Activation

These further subdivide into no fewer than twenty sub-categories that reveal virtually all you need to know about the numbers, demographics, interaction with third-party systems and other online behaviours of your site visitors. Hell – they can probably even tell you their shoe sizes! The reporting is also highly refined and includes a comprehensive range of access controls, management tools and alerts that can be configured by the user.

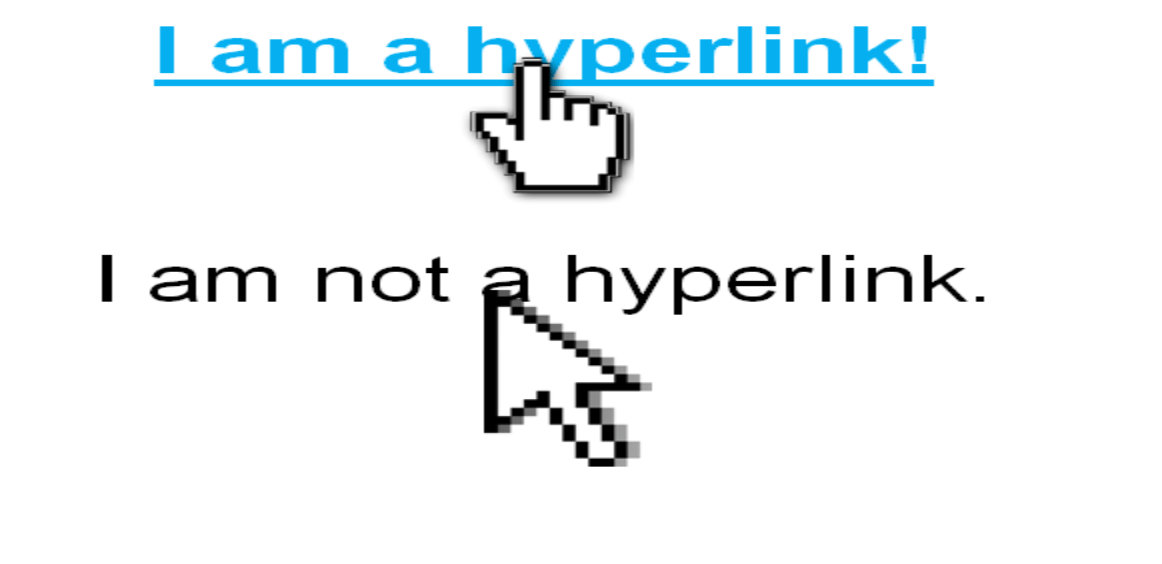

ANCHOR TEXT

Anchor text refers to the clickable text on a web page that’s typically written in blue and underlined. Alternatively termed a ‘hyperlink’, once clicked it will automatically direct the visitor to another URL, thanks to a string of hidden computer code anchored behind the link – hence the term, anchor text.

From an SEO perspective, it’s essential that the right keywords are included and that the link is relevant to the subject – we can’t emphasise relevancy enough when it comes to website link-building. Google and other search engines consider anchor text to be an important indicator of page relevance. Just remember to link powerful keywords, rather than less relevant words in the text, for added SEO juice.

It also adds weight to your SEO if other sites and blogs decide to incorporate hyperlinks that point to a URL on your site. Inbound links like these are SEO gold-dust! Even internal anchor text pointing at other pages within your site can contribute to its ranking.

ARTICLE SPINNING

Article spinning is an SEO technique commonly employed by webmasters and entails rewriting an original article for inclusion in other websites or similar sources of backlinks. Although spinning copy is one of the many practices that gets Google’s hackles up, and is therefore considered a ‘black hat’ method (duplication and plagiarism being two of the many things hyper-sensitive Google abhors), it can still be an effective tool if used judiciously. There is even software on the market that can do the content-spinning job for you, instead of having to train monkeys or use copywriters, although the results can be erratic, to say the least, and the monkeys may well do a better job.

It’s not entirely clear to what degree search engine algorithms can detect spun copy and then impose ranking penalties but it’s certainly preferable to manually spin articles using human beings, rather than scrape copy from other sites wholesale and pass it off as original content of your own. That would be tantamount to poking the Google-monster with a sharp stick.

ASTROTURFING

Astroturfing is a nefarious, black hat SEO tactic that is best avoided by webmasters. It works by seeding fake, grassroots support for a campaign or movement across various social media platforms, hence the term astroturf (fake grass). Some internet marketing companies exist purely to promote businesses via this technique, but we believe it’s a far better long-term strategy to seed high quality grass of your own, rather than seek shortcuts.

AUTHORITY

The authority of domains and individual web pages is scored on a 100-point scale (100 being the best) that aims to predict how strongly they will rank with Google. Authority stands alongside Relevance as one of the twin towers of SEO.

The authority scale works logarithmically (cue blank expressions!). A real-world example would be: if you join a gym and start bench-pressing 30kg, it’s relatively easy to up the weight from 30 to 40 to 50kg. However, once you move on to heavier weights, it becomes progressively harder to make those jumps. The same is true of authority rankings – it’s easy at first but far harder to grow your score as you creep up the scale towards 100.

Domain authority is currently measured using over 40 ranking signals and below are some of the most dominant:

- Link building – The number of high-quality websites that are linking to yours and the number you link to from your own site. N.B. We said ‘high-quality’, and linking to toxic sites packed with spam or with zero relevance is likely to incur the wrath of all-seeing Google and wreck all your valuable SEO work by attracting harsh penalties. In general, the more links to trusted sites the better.

- Domain age – there’s nothing you can do about the age of your website, but it’s a bad idea to frequently change domain names, because if your position was on page one, it will often take search engines a good few months to catch up and give you such favourable ranking again.

- Keywords in Domains – this is proven to work best if the first word in your domain name is a powerful keyword. Keywords occurring later in the name carry less SEO weight.

- Site quality – this is all about offering great user experience which begins with ensuring that pages load real fast. If they fail to do so visitors will vote with their feet or in this case their mice. Page copy should be well written and relevant. Again, it’s all about providing a great user experience.

- Traffic – this is something that will grow with time if you’re committed to using all the SEO tools in your bag. Search engines will notice sites that have a lot of visitors and view them as having greater trust and authority, ensuring they will inexorably climb up the rankings. How long this takes will depend on the competition.

AUTHORITY SITE

This is the kind of website that everyone should aspire to build. An authority site consistently provides reliable information or opinion that visitors learn to trust and will then bookmark, so they can easily return on a regular basis. Think of these sites as wise uncles that you always turn to for help and advice. They are typically run by recognisable organisations, such as Nine.com, ABC, the Bureau of Meteorology, Wikipedia or eBay.

Authority sites often feature a great deal of user interaction and their webmasters are adept at integrating social media streams like Twitter, Instagram and Facebook, which is terrific for SEO. What they avoid like the plague is the promotion of spurious affiliate sites and packing their content with distracting spam.

AZURE COGNITIVE SERVICES

Azure Cognitive Services is a suite of cloud-based AI services offered by Microsoft Azure, designed to empower developers with ready-to-use AI capabilities that enhance applications without requiring extensive machine learning expertise. These services cover a broad range of AI functionalities, including vision, speech, language, and decision-making, enabling developers to integrate features like image recognition, text translation, sentiment analysis, and chatbot interactions seamlessly into their applications. Leveraging advanced machine learning algorithms and APIs, Azure Cognitive Services facilitate the creation of intelligent and user-friendly applications across various industries, such as healthcare, finance, retail, and manufacturing. With Microsoft’s commitment to security, scalability, and compliance, Azure Cognitive Services provide developers with the tools needed to build innovative AI-driven solutions while ensuring data privacy and regulatory adherence.

B2B

Business-to-business. When designing a website to appeal to other businesses the content should reflect this aim and possess a corporate feel – known as ‘tone of voice’ in the advertising world. Well written, informative copy will attract commercial visitors, encourage them to remain on your site longer and lead to them returning on a regular basis. All of this builds precious authority which is a vital part of SEO.

B2C

Business-to-consumer. Content should be tailored to your targeted visitors in the same way as B2B. A good SEO writer will understand the mindset of prospects and pitch their copy accordingly by establishing the right tone of voice.

BACKLINKS

Variously known as ‘incoming links’, ‘inlinks’ or ‘citations’, backlinks are essentially referrals from other websites via the clickable, blue hyperlinks we are all familiar with.

It’s impossible to overemphasise the value of high quality (i.e. relevant and authoritative) backlinks to SEO, but we’re going to try anyway! Google and its rival search engines are drawn to web pages with great backlinks like sharks to blood. Or, to mix metaphors, they’re the popular votes that can help win you the election. Google’s bots think, “Hey! Check out all the links to this page, it must be good”, and reward it with improved ranking. A whole niche industry has grown up with the aim of satisfying the demand for inbound links, employing a mixed palette of white, grey and black hat strategies.

A common link generation tactic is to invest in ‘link farms’ – a group of interconnected blogs also known as PBNs (private blog networks) – but Google got wise to this dubious practice back in 2014 and you now risk incurring a ranking penalty if it detects you, although this doesn’t seem to deter thousands of web developers. It’s up to you to weigh up the risks and rewards.

Remember, not all inbound links are created equal, and it’s best to gradually build your own links with authoritative sites that are relevant to your own company or organisation. This can be done in many ways, including the one that Google prefers i.e. wait for other sites to naturally notice yours and build links to it. But this takes aeons – way too long for commercial purposes. Instead, you could try leveraging connections with authority sites that carry a lot of juice – Google Places, Digg, LinkedIn, Foursquare, Squidoo and Yelp are all good examples. You also need to use strong, SEO-friendly anchor text in the visible part of all your links.

Another important point regarding links from social media sites – they can be designated in a source site’s HTML code by its webmaster as ‘no-follow’ (links without this marker are informally known as ‘do-follow’). A no-follow link is prohibited from carrying page rank juice to your site, so it’s always worth checking this.
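Checking whether a link is no-follow means looking at the rel attribute of its HTML tag. The Python sketch below, using only the standard library, lists every link in a chunk of HTML and flags those marked nofollow. The sample HTML and URLs are invented for illustration.

```python
from html.parser import HTMLParser


class LinkClassifier(HTMLParser):
    """Records (href, is_nofollow) for every <a> tag that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if href is None:
            return
        # rel can hold several space-separated values, e.g. "nofollow noopener".
        rel_tokens = (attributes.get("rel") or "").lower().split()
        self.links.append((href, "nofollow" in rel_tokens))


def classify_links(html):
    """Return a list of (href, is_nofollow) pairs found in the HTML."""
    classifier = LinkClassifier()
    classifier.feed(html)
    return classifier.links


# Hypothetical snippet from a page that links to your site.
snippet = (
    '<a href="https://a.example" rel="nofollow noopener">A</a>'
    '<a href="https://b.example">B</a>'
)
print(classify_links(snippet))
```

In this made-up snippet the first link is no-follow and the second, having no rel marker at all, counts as do-follow.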

BARD

Bard is an experimental conversational AI service from Google AI, powered by LaMDA. It allows users to have conversations with a large language model, which can be used for a variety of purposes, such as brainstorming ideas, sparking creativity, and accelerating productivity. Bard is still under development, but it has already learned to perform many kinds of tasks, including:

- Generating different creative text formats, like poems, code, scripts, musical pieces, emails, letters, etc.

- Translating languages

- Writing different kinds of creative content

- Answering your questions in an informative way

Bard is currently available to a limited number of users, but Google plans to make it more widely available in the future.

If you are interested in trying Bard, you can sign up for the waitlist on the Google AI website.

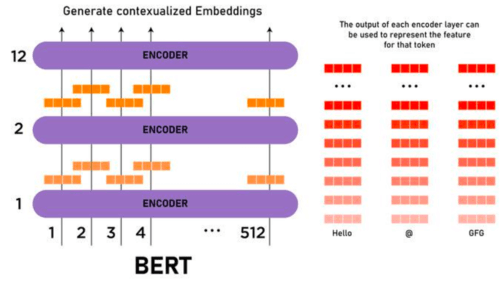

BERT

BERT, short for Bidirectional Encoder Representations from Transformers, stands as a watershed moment in natural language processing (NLP) and machine learning. Developed by Google, BERT represents a breakthrough in understanding the nuances of language by comprehending the context of words within sentences. Unlike its predecessors, BERT can interpret the meaning of a word by considering the words that come before and after it, acknowledging the bidirectional nature of language comprehension. This revolutionary model uses a transformer architecture, allowing it to process words in relation to all the other words in a sentence, thus capturing the complexity of language structure and semantics. BERT’s capability to grasp context has significantly improved search engine results and language-based AI tasks, enhancing the accuracy and relevance of responses to queries across various applications and platforms.

BLACK HAT SEO

Black hat is an umbrella term for any nefarious SEO technique that breaks search engines’ rules or terms of service by trying to fool them into ranking sites higher, almost always to the detriment of user experience. It’s considered unethical or bad netiquette.

Below are some common black hat strategies that make Google and other search engines blow a gasket.

- Keyword stuffing – one of the main ways (but not the only way) that search engines establish relevancy is by scanning websites for keywords typed into a search enquiry. Crafty web developers will don their black hats and freely distribute these keywords throughout a site’s copy. But this is a trick that most search engines became wise to years ago, and will now penalise once they detect it, usually by reducing the site’s ranking. Worst case scenario is that the offender is totally banned from search results.

- Hidden text – this is a sneaky approach to keyword stuffing that can’t be viewed by site visitors, as the text is buried deep in the computer code. Back in the day it was easy for web developers to employ simple tricks, like disguising text by matching it, chameleon-style, with the background colour. But this and many other old ruses are easily spotted by today’s intuitive search engine spiders and you will pay a high price for your subterfuge!

- Doorway pages – essentially these are fake websites crammed with keywords, key phrases and meta tags, purely aimed at attracting Googlebots and other search engine spiders, rather than genuine traffic, and thus improving rank. Sometimes termed cloaking or redirection pages, they immediately funnel users to a different URL, meaning they are forced to take an annoying additional step in their searches. This is bad SEO.

- Link purchasing – if you still don’t appreciate the vital importance of backlink building to SEO, you’ve clearly not been paying attention! It’s so important that a whole new industry of professional link-sellers has grown up to sell insatiable webmasters all they can consume. Big fish in the link-buying ocean throw a lot of cash at it – tens of thousands of dollars is far from uncommon. It’s a highly sophisticated business and link advertisers commonly sell their wares by category, language, domain authority or other groupings. They also buy links themselves, providing a means for site operators to earn passive income. Despite its popularity, link purchasing is regarded by Google as a manipulative, black hat practice. It may work as a short-term strategy but in the long-term it’s seriously risky, because Google is on the case and has the brains to work out which links are bought in and which are ‘natural’.

BLOG

Blogs (short for weblogs) are ubiquitous and now cover every conceivable topic, plus quite a few inconceivable ones – ‘Hungover Owls’ or ‘Food on my Dog’ anyone?

Capricious Google may continuously move the goalposts but the SEO power of blogs remains eternal. One of the reasons for this is the chance to leverage our old friend, the backlink. Done right, there’s plenty of SEO capital to be acquired from including high-quality links in your site’s blog pages and requesting reciprocation by 3rd parties with superior authority. With Google alone indexing close to 50 billion webpages, you need to find any edge you can to get noticed.

Blogs are the perfect approach to long tail internet marketing, because they encourage fresh content through visitor interaction, which serves to enhance a site’s reputation and boost its ranking. Google adores new content, rewarding it with greater SEO weight via its QDF (Query Deserves Freshness) algorithm. So, if you have a blog, remember to update it on a regular basis. If it’s hard to find the time, invite guest bloggers or get employees to contribute.

BOUNCE RATE

If a visitor lands on your page you need to pull out all the stops to encourage them to stay and drill down into your site. If they choose to click away after viewing just one page, it’s known as ‘bouncing’. A high bounce rate (the percentage of visitors who immediately leave) is often an indication of a poorly designed website or one with little or no relevance to the user’s original search query.

So, does bounce rate affect search engine ranking and, if so, how much? Well, the answer is – it’s complicated! There have been numerous geeky SEO experiments conducted that have produced inconclusive results, but the consensus is that Google, for example, would find the necessary bounce rate data hard to acquire and track. Bounce rate taken in isolation also fails to qualify the user experience. For example: if a visitor lands on your ‘Contact Us’ page, acquires the phone number they need then leaves, this would be a positive outcome and it would be wrong for a search engine to penalise it as a bounce. Equally, the time someone spends on a certain page indicates very little. If they’re on a page for half-an-hour they could be transfixed by your Shakespearean prose, distracted by a conversation or simply asleep. Having said all this, it’s good SEO practice to build alluring webpages that encourage visitors to click on links. You don’t want them bouncing simply because your site looks cheap and tacky.
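For the number-crunchers, here is a minimal sketch of how the bounce rate described above could be worked out from your own visit data. It assumes you already have a simple list of how many pages each visitor viewed; the function name and the sample figures are purely illustrative, not taken from any particular analytics package.

```python
def bounce_rate(pages_per_visit):
    """Return the percentage of visits that viewed exactly one page."""
    if not pages_per_visit:
        return 0.0
    bounces = sum(1 for pages in pages_per_visit if pages == 1)
    return 100.0 * bounces / len(pages_per_visit)


# Hypothetical sample: pages viewed in each of eight visits
visits = [1, 3, 1, 5, 2, 1, 1, 4]
print(f"Bounce rate: {bounce_rate(visits):.1f}%")
```

Remember that the figure on its own says nothing about whether the visitor got what they came for, so treat it as a prompt for investigation rather than a verdict.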

BREADCRUMBS

The larger your site, the more important it is to incorporate breadcrumbs, which facilitate easy navigation, improve user experience and help lower bounce rate. Breadcrumbs are the trail of page links that you often find near the top of a webpage (not to be confused with primary navigation tabs or used as replacements for back or forward buttons). They let users know precisely where they are in a site’s hierarchy. Not only are breadcrumbs useful to us humans, they also help search engines to understand website structure – great for SEO! Google spiders and their rival crawlers have a real taste for breadcrumbs, because they work logically and make it easy for them to establish how the pages relate to one another. They further contribute to optimisation in offering a chance to add keywords or concise key phrases. As always, the trick is not to over-egg the pudding and risk incurring search engine penalties.

Breadcrumbs come in three main types:

- Location breadcrumbs – show you exactly where you are in the site hierarchy

- Path breadcrumbs – show you how you got there

- Keyword breadcrumbs – use keywords instead of page titles to indicate location
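To show how a location breadcrumb trail maps onto site hierarchy, here is a rough sketch that builds one from a page URL. It assumes your URL folders mirror your site structure, and the example address is made up. A real CMS would normally use proper page titles rather than tidied-up folder names.

```python
from urllib.parse import urlparse


def location_breadcrumbs(url, home_label="Home"):
    """Return (label, path) pairs from the homepage down to the current page."""
    segments = [s for s in urlparse(url).path.split("/") if s]
    crumbs = [(home_label, "/")]
    path = ""
    for segment in segments:
        path += "/" + segment
        # Assumption: folder names are hyphenated versions of page names
        label = segment.replace("-", " ").title()
        crumbs.append((label, path))
    return crumbs


trail = location_breadcrumbs("https://example.com/services/seo/local-seo")
print(" > ".join(label for label, _ in trail))
```

Each pair holds the text to display and the link it should point to, which is all a template needs to render the trail.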

CANONICAL ISSUES

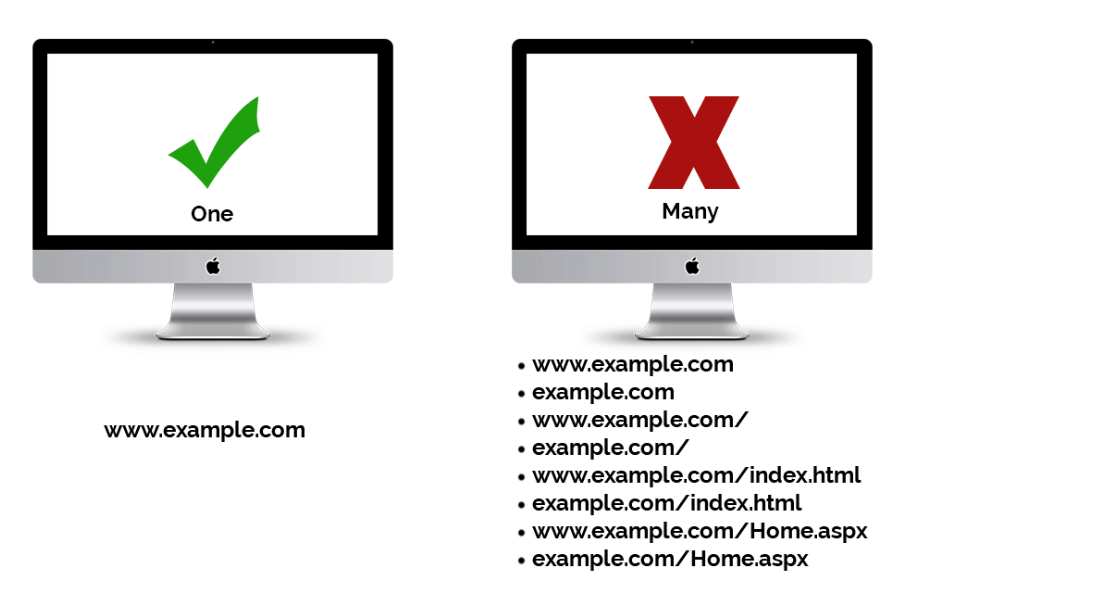

Canonical issues or canonicalization (don’t even try to pronounce it) both refer to single web pages accessed via different URLs (Uniform Resource Locators). It might help to imagine a room with several doors, each marked by a slightly different sign. Confusing? Well, many search engines would tend to agree and have a tough time deciding which is the best to point the user to.

Web spiders aren’t good at riddles, so it’s best not to confuse them by having several URLs, as in those below that link to the same homepage:

realconfusion.com

www.realconfusion.com

realconfusion.com/index.php

realconfusion.com.au

realconfusion.com.au/index.php

The problem is compounded when ‘session IDs’ are added to the equation, which generate a unique URL for every visit e.g. http://realconfusion.com/localhost88page8.aspx. At this point the spiders will be tearing their own legs off! Webmasters often use session IDs for depth-tracking purposes but a cookie-based approach would be just as effective and far kinder to our arachnid friends.

Why is all this so important? Well, it all comes down to ‘link authority’. Having a multitude of URLs has the effect of diluting this authority. Powerful link juice becomes an insipid concoction, like watered down whiskey. Think of the ‘canon’ as the official page and anything else as duplication – and you understand by now how much Google and its competitors frown on duplication.

There are various fixes available for canonical issues, including Google’s ‘canonical tagging’ that allows you to indicate in the HTML header that the URL is a copy. Plus, there’s always our old, spider-friendly ‘301 redirect’ to fall back on.
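As an illustration of the idea, the sketch below boils the homepage variants listed earlier down to a single official address. Everything in it is an assumption made for the example: the choice of `realconfusion.com` as the canonical host, the list of alias hosts, the default document names and the session parameter names. The result is the sort of URL you would place in a `<link rel="canonical" href="...">` tag or use as the target of a 301 redirect.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse

# Assumptions for illustration only
CANONICAL_HOST = "realconfusion.com"
ALIAS_HOSTS = {
    "www.realconfusion.com",
    "realconfusion.com.au",
    "www.realconfusion.com.au",
}
DEFAULT_DOCUMENTS = {"index.php", "index.html"}
SESSION_PARAMS = {"sid", "sessionid", "phpsessid"}


def canonical_url(url):
    """Map the many variants of an address onto one official URL."""
    parsed = urlparse(url if "://" in url else "https://" + url)
    host = parsed.netloc.lower()
    if host in ALIAS_HOSTS:
        host = CANONICAL_HOST
    # Drop a trailing default document such as index.php
    segments = parsed.path.split("/")
    if segments[-1].lower() in DEFAULT_DOCUMENTS:
        segments[-1] = ""
    path = "/".join(segments) or "/"
    # Strip session identifiers from the query string
    query = urlencode(
        [(k, v) for k, v in parse_qsl(parsed.query) if k.lower() not in SESSION_PARAMS]
    )
    return urlunparse(("https", host, path, "", query, ""))


for variant in ["realconfusion.com", "realconfusion.com/index.php",
                "realconfusion.com.au", "realconfusion.com.au/index.php"]:
    print(variant, "->", canonical_url(variant))
```

All four variants should come out as the same address, which is exactly what the spiders want to see.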

CHAT-GPT

Chat-GPT is an artificial intelligence (AI) model developed by OpenAI that excels in natural language processing tasks, particularly in conversational contexts. It’s designed to understand and generate human-like text based on the input it receives. Essentially, Chat-GPT can engage in dialogue, answer questions, provide information, and even simulate conversation with users, mimicking human conversation patterns and responses.

At its core, Chat-GPT leverages a technology called Generative Pre-trained Transformer (GPT), which is a type of neural network architecture. This architecture enables the model to learn patterns, structures, and meanings from vast amounts of text data it’s trained on. By exposing itself to diverse sources of human language, including books, articles, websites, and more, Chat-GPT becomes proficient in understanding and generating text across various topics and contexts.

With its ability to comprehend and produce human-like responses, Chat-GPT has a wide range of applications, including virtual assistants, customer service chatbots, language translation tools, educational resources, and more. Its versatility and adaptability make it a powerful tool for automating communication tasks and enhancing user experiences in numerous domains.

CLICK FRAUD

In the world of pay-per-click (PPC) advertising, click fraud is a major issue. It refers to various methods of generating illegitimate clicks, which in 2014 were estimated to have created an eye-watering $11 billion in advertising revenue worldwide. It can be done manually by teams of humans or by using specially designed software that repeatedly clicks on banner ads or paid links.

So, who commits click fraud? There are two main types:

- Competitive – this is a malicious act, perpetrated by direct competitors with the aim of seriously depleting your advertising budget. The more clicks, the more you pay, even though zero revenue is generated. In a worst-case scenario, you might even see all this interest as a sign of a sales boom, start hiring more staff to cope or buy in more stock.

- Affiliate – this is a black hat technique, usually employed by greedy site owners or publishers, in an effort to increase their own PPC revenue. They can use bots or ‘click farms’ to click on ads on their own sites and squeeze as much income as possible out of them.

Increasingly aware of the problem, PPC advertisers have put a lot of pressure on companies like Google and Yahoo to put their houses in order. All the main search engines have responded by creating taskforces to identify and punish click fraudsters. They’ve also introduced detection algorithms that work in real time. The alternative would’ve been a huge loss of consumer confidence in the value of advertising platforms such as AdWords and Bing Ads.

CLICK FRAUD COUNTER-MEASURES

Vigilance is everything – if you notice a sudden spike in your PPC figures, it could be a sign that something fishy is going on. In this case, paranoia is good! Don’t simply rely on the search engines’ own powers of detection – after all, clicks earn them money whether legitimate or fraudulent.

Through your own in-house reporting, you need to keep close tabs on the following data:

- IP address

- Click timestamp

- Action timestamp

- User agent

These will help you identify patterns in regular visitors who click on your banner ads or paid links but rarely convert into actions. You can then search the IP address to find out if the clicks are genuine or potentially malicious or fraudulent.
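Here is a minimal sketch of the kind of pattern-spotting described above. It assumes you log each ad click as a record holding the four data points listed, with the action timestamp left empty when the visitor did nothing further. The field names, the threshold of five clicks and the sample records are all hypothetical.

```python
from collections import defaultdict


def suspicious_ips(click_log, min_clicks=5):
    """Return IP addresses with at least min_clicks ad clicks and no follow-up action."""
    clicks = defaultdict(int)
    actions = defaultdict(int)
    for record in click_log:
        clicks[record["ip"]] += 1
        if record["action_time"] is not None:
            actions[record["ip"]] += 1
    return sorted(
        ip for ip, count in clicks.items()
        if count >= min_clicks and actions[ip] == 0
    )


# Hypothetical log: one address clicks six times and never converts
click_log = [
    {"ip": "203.0.113.7", "click_time": f"2024-05-01T10:0{i}:00",
     "action_time": None, "user_agent": "ExampleBot/1.0"}
    for i in range(6)
] + [
    {"ip": "198.51.100.4", "click_time": "2024-05-01T11:15:00",
     "action_time": "2024-05-01T11:19:30", "user_agent": "Mozilla/5.0"},
    {"ip": "198.51.100.4", "click_time": "2024-05-02T09:02:00",
     "action_time": None, "user_agent": "Mozilla/5.0"},
]

print(suspicious_ips(click_log))
```

A flagged address is only a lead, not proof. The next step is still to look it up and decide whether the clicks look genuine.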

CLOAKING

Cloaking is yet another nefarious, black hat SEO practice that you need to avoid or risk termination with extreme prejudice by Google and its fellow search engines. Cloaking ruins the user experience by producing a result that doesn’t match the original enquiry or, as Google puts it: “Serving up different results based on user agent may cause your site to be perceived as deceptive and removed from the Google index”. The goal of cloaking is usually to boost a website’s ranking for specific keywords. Back in the old days, it was common to click on a site promising, for example, cinema reviews, only to be presented with a hardcore porn page. Avoid cloaking at all costs. It’s just not worth the risk.

CONTENT MANAGEMENT SYSTEM (CMS)

Typically, websites are built by techies (identifiable by their geek chic clothing and often found sipping turmeric lattes in offices filled with beanbags) who are familiar with programming and markup languages, like PHP, Python, Java and HTML. That’s great for those with the time and technical nous, but what if you have a site and need to upload fresh images or make a new blog entry? CMS software allows you to do so without having to give your hard-earned cash to a geek. When commissioning a new website, it’s wise to consider the long-term cost advantages of having a CMS built in.

There’s a huge range of CMS platforms, including WordPress (probably the most commonly used), Drupal, Umbraco and Magento. All are designed to help you easily upload, edit and manage content. Most also work with multiple users, who can be issued passwords with limited permissions, so that they can make changes in real-time. For example, travel agency staff could update pages with new holiday offers or sports journalists could post their football reports online.

In terms of SEO, the different CMS platforms vary greatly and there is an ongoing debate among experts over which is the most effective. At the very least, you need to be asking – will the CMS program feature the following?

- Straightforward reading by search engines – metatag and title generation

- Easy keyword optimisation

- Easy sharing to social media platforms

- Easy tracking

- Great user experience – fast loading times

- Supports HTML headers

- Enables breadcrumbs

- Manages backlinks

- Provides keyword suggestions and shows keyword density

CODE-SWAPPING (Bait-and-Switch)

This is another black hat strategy used by unscrupulous webmasters, who create highly SEO’d web pages known as ‘doorway pages’, wait for them to achieve high page ranking, only to switch the content at a later date. You might also see them referred to as gateway, zebra, portal, jump or bridge pages. They work by using fast ‘meta refresh’ commands but the practice will be penalized by most search engines if they detect it.

COMMENT SPAM

Blogs and comment boxes are terrific for SEO – lots of fresh, on-point content that Google and other search engines are likely to reward with increased page ranking. However, the downside is that popular blogs can also be leveraged by crafty third-parties looking to build their own inlinks by posting spam blog comments. Why is this a problem? One of the reasons is that paranoid search engines may suspect your site is profiting in some way from these links and penalize its ranking.

Irrelevant comments also wreck the user experience and can often lead to visitors clicking away. So, how do you fight back? Here are a few simple steps that a webmaster can take:

- Switch on comment moderation

- Disallow all anonymous blog posting

- Use CAPTCHAs to prevent automated spamming by computers

- Employ the ‘nofollow’ attribute in your HTML links to stop search engines counting them

- Disallow all hyperlinks in blog posts

- Require registration to comment

- Use anti-spam software that works in real-time e.g. Akismet or Sift Science

- Run through comments and delete those you think are suspicious

COMPUTER VISION

Computer Vision is a field of artificial intelligence focused on enabling computers to interpret and understand visual information from the real world. It encompasses a variety of tasks, including image recognition, object detection, image segmentation, and scene understanding. Computer Vision algorithms analyze digital images or videos, extracting features and patterns to make sense of the visual content. Techniques such as convolutional neural networks (CNNs), deep learning, and image processing are commonly used in Computer Vision systems. Applications of Computer Vision span across numerous domains, including autonomous vehicles, medical imaging, surveillance, augmented reality, and robotics. By providing machines with the ability to perceive and comprehend visual data, Computer Vision plays a crucial role in enabling automation, enhancing human-computer interaction, and advancing technology in various industries.

CONTENT

If you’ve spent any time at all worshipping at the temple of SEO, you can’t fail to have heard the mantra, “Content is king!”, intoned by countless high priests. Sorry, but you’re about to hear us join in the chorus, because great content remains the puppy’s nuts and the cat’s pyjamas, so don’t let anyone persuade you otherwise.

So, what makes for great content? For a start, it needs to be the opposite of everything Google despises and punishes, which includes plagiarism, keyword-stuffing, duplication, irrelevance and hidden text.

Instead, you need to present your visitors with completely original, well-written copy (text) that’s bang on topic, interspersed with powerful keywords and packed with useful information and hot topics. If you can add complementary images and video to the mix, all the better.

The aim is to satisfy the increasingly demanding search engine spiders, while encouraging your visitors to come back for more. In the long-run you hope to build a trusted, appealing site with bags of authority – the Morgan Freeman of websites!

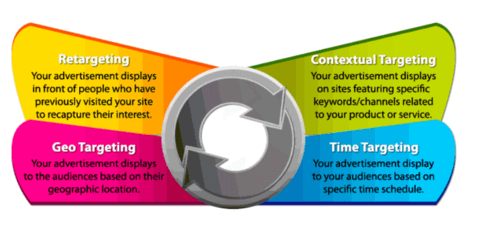

CONTEXTUAL ADVERTISEMENTS

The main point about contextual advertising and SEO is that the ads need to be relevant and targeted. You don’t want to land on a football website only to be confronted by banner ads for women’s hair products, or vice versa. Although, given the vanity of many male footballers this may not prove wholly irrelevant! Search engines are continually refining their search algorithms, to improve the user experience. A webmaster worth his salt won’t overburden a page with ads, particularly those that make it ‘top heavy’, meaning the visitor must scroll down a long way to find the information they came for. Plus, it makes the website look cheap and nasty.

Contextual banner and pop-up ads normally use keywords for targeting purposes. Advertisers need to register with one of the many pay-per-click (PPC) services. These include Google AdWords, Media.net, LookSmart, Pulse 360 and AOL Advertising.

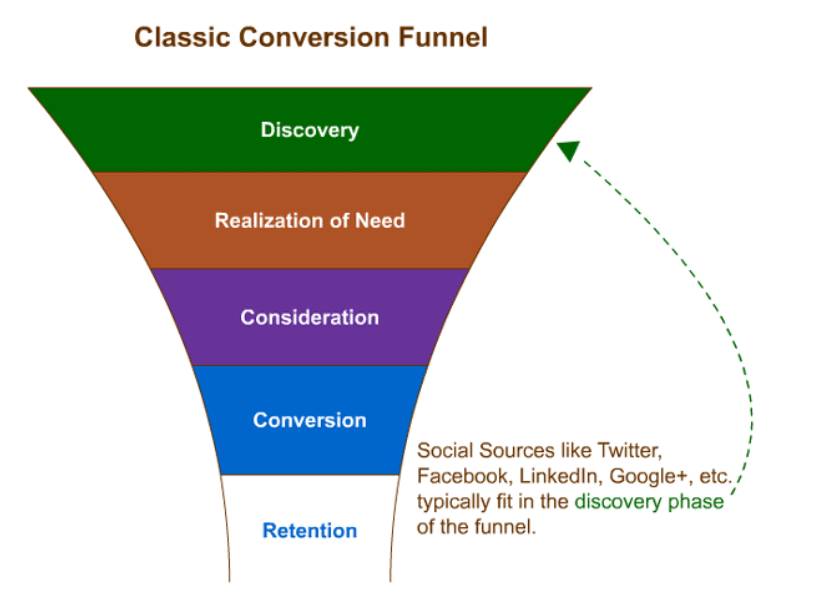

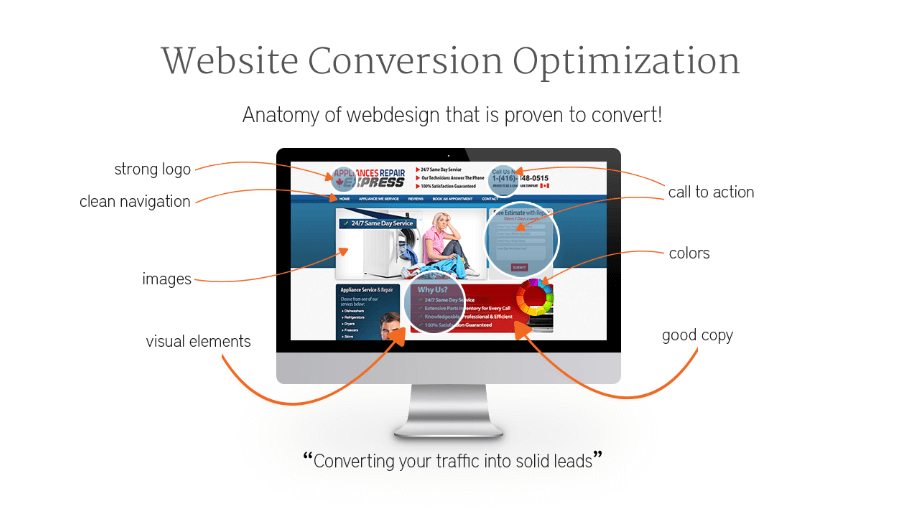

CONVERSION

Having a classy, professionally-built website that you can show off to friends and clients is all well and good, but the ultimate goal of commercial websites is to elicit some sort of action or response from visitors. This is known in the online marketing world as ‘conversion’ and is typically measured in advertisement clicks, sign-ups and sales. An effective website should have a prominent ‘call to action’ on every page – if a potential customer is forced to drill down into a site just to find your phone number, he will vote with his mouse and probably click away to a competitor.

CONVERSION RATES

For our purposes, conversion rate is the percentage of website visitors who complete a ‘call-to-action’, such as filling in an online enquiry form, calling an order line or sending an email. If you get your SEO right, your site should attract qualified traffic and increase its conversion rate.
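The sum itself is simple, as this short sketch shows. The function name and the visitor numbers are invented for the example.

```python
def conversion_rate(conversions, visitors):
    """Return the percentage of visitors who completed a call-to-action."""
    if visitors == 0:
        return 0.0
    return 100.0 * conversions / visitors


# Hypothetical month: 1,200 visitors, 30 enquiry forms submitted
print(f"Conversion rate: {conversion_rate(30, 1200):.1f}%")
```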

COPILOT (GitHub)

GitHub Copilot is your AI coding assistant, acting as a virtual pair programmer within your development environment. It analyzes your code and context, suggesting relevant completions, functions, and even entire lines of code to accelerate your development process. Unlike simple auto-completion tools, Copilot leverages advanced AI to understand your intent and generate code tailored to your specific needs, boosting your productivity and streamlining the coding experience.

COPY.ai

Copy.ai is a platform powered by artificial intelligence (AI) that assists in generating marketing copy, sales content, and creative text formats. It caters to various needs, from crafting social media posts and ad copy to building email sequences and blog outlines. Copy.ai aims to streamline the content creation process by offering pre-built templates and AI suggestions, saving you time and potentially sparking creative ideas for your marketing and communication endeavors.

COVARIANT

Covariant is a leading artificial intelligence (AI) robotics company dedicated to revolutionizing warehouse and logistics operations. At the core of Covariant’s technology is the “Covariant Brain,” a sophisticated AI platform designed to empower robots with exceptional vision and problem-solving abilities. This allows robots to autonomously and reliably handle diverse tasks like picking, sorting, and packing a wide array of items. Covariant’s solutions streamline operations, reduce labor costs, and increase efficiency, helping businesses meet the ever-growing demands of e-commerce and supply chain logistics.

Covariant Brain is the heart and mind of Covariant’s robots. It’s an AI platform specifically designed for robotic tasks in warehouses and logistics. Here are its key features:

- Pre-trained: Unlike many AI systems, the Covariant Brain is pre-trained on millions of real-world picking scenarios from Covariant robots around the globe. This “out-of-the-box” knowledge allows robots to handle diverse items without extensive human programming.

- Versatility: The Brain can pick virtually any item, regardless of size, shape, or packaging, making it adaptable to various warehouse tasks.

- Continuous Learning: It doesn’t stop learning after initial training. The Brain continuously improves through “fleet learning,” where all connected robots share their experiences, allowing the entire network to adapt and become more efficient.

- Autonomous Picking: The Brain empowers robots to “see, think, and act” on their own, meaning they can pick items, handle unexpected situations, and even recover from minor errors without constant human intervention.

Overall, the Covariant Brain is a powerful AI system that transforms robots into intelligent and adaptable workers, boosting efficiency and flexibility in warehouse and logistics operations.

CPC – COST PER CLICK

Cost per click (CPC) is the price you end up paying the host of your PPC (pay per click) advertising campaign. Before you get all excited and rush into setting up an account with Google AdWords, Bing, Yahoo or one of the many other PPC platforms out there, you need to figure out how much you can afford. Monthly budgets can range from $50 to $500,000 or even beyond for the big-hitters. Cost per click currently averages around $2 with Google AdWords but can be as high as $50 in highly competitive industries, like law and insurance, filled with big players.

Most hosts operate a bidding system and costs vary depending on several factors, including the popularity of the keywords or key phrases you choose to bid on and the amount of traffic hitting the site. It’s good practice not to focus on one powerful yet expensive keyword, but spread your net wider to maximise potential and make your budget go further. Some hosts help steady the nerves of financial controllers by allowing customers to put a cap on their spending.

CPM – Cost Per Thousand Impressions

CPM is another way that pay per click advertising platforms calculate your costs and their revenue (if you’re wondering where the ‘M’ comes from, it’s the Roman numeral for 1,000). CPM differs from CPC, in that it counts impressions i.e. the appearance of an ad on a search engine results page. If your CPM is $2 you’ll be charged $2 every 1,000 times your ad is viewed. CPM is a good way to raise brand awareness.
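To make the difference between the two pricing models concrete, here is a small worked sketch. The $2 CPM comes from the example above; the impression and click counts are made up.

```python
def cpm_cost(impressions, cpm):
    """Cost of a campaign billed per 1,000 ad impressions."""
    return impressions / 1000 * cpm


def cpc_cost(clicks, cpc):
    """Cost of a campaign billed per click."""
    return clicks * cpc


# 50,000 impressions at a $2 CPM
print(f"CPM campaign: ${cpm_cost(50_000, 2.0):.2f}")
# 50 clicks at a $2 CPC
print(f"CPC campaign: ${cpc_cost(50, 2.0):.2f}")
```

In this made-up case both campaigns cost the same, so the better deal depends on how many of those 50,000 viewers would actually have clicked.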

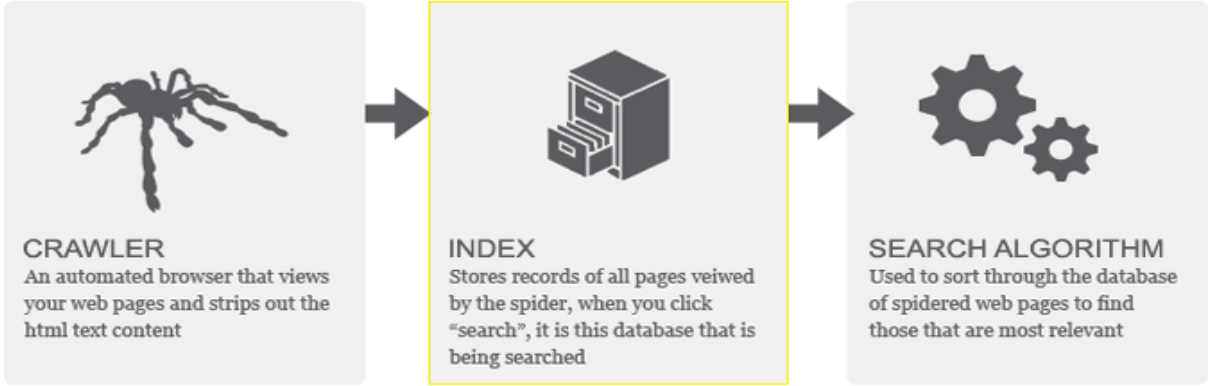

CRAWLER

Many geeks are arachnophobic, but if you’re a web developer, a big part of your job is to attract as many web spiders or ‘crawlers’ to your site as possible. But fear not; the spiders of which we speak are simply software programs designed to crawl around the worldwide web, gathering data as they go.

Web crawlers or internet bots are typically used to make copies of web pages and send the information back to search engine HQ for processing. This data can then be used to assess the authority of a web page and determine its subsequent ranking. A spider usually begins its journey across the web by referring to lists of links found on huge servers. It will then go hunting for specific keywords/phrases and evaluate the context in which it finds them. The clever spiders don’t only take note of the words they encounter, they also understand that their placement in prime locations – heading, initial sentences, metatags – makes them more important. This explains why powerful keywords and great site architecture are so important to SEO.

Web developers can set rules for web crawlers by placing a ‘robots.txt’ file on their servers, commanding them to ignore specific site pages, for example, or to stay out of whole sections. This is often used if a site is under construction and not ready to go live. If all goes to plan, the spiders should diligently read this file on arrival and act accordingly.
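To see how a well-behaved spider reads these rules, the sketch below feeds a sample robots.txt file to the parser in Python’s standard library and asks which pages may be visited. The rules and paths are invented for illustration, and `Googlebot` is used only as an example crawler name.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep all crawlers out of two folders
ROBOTS_TXT = """\
User-agent: *
Disallow: /under-construction/
Disallow: /admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_crawl(path, user_agent="Googlebot"):
    """Return True if the rules allow this crawler to fetch the path."""
    return parser.can_fetch(user_agent, path)


for path in ["/blog/", "/under-construction/new-home.html", "/admin/login"]:
    print(path, "->", "allowed" if may_crawl(path) else "blocked")
```

Bear in mind that these rules are a polite request. Reputable crawlers honour them, but nothing forces a badly behaved bot to do so.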

DALL·E 2

DALL·E 2, introduced by OpenAI in 2022, is an advanced generative model that builds upon the original DALL·E model, which was renowned for its ability to generate images from textual descriptions. DALL·E 2 extends this capability by allowing users to generate images from both textual and image prompts, providing greater flexibility and creative control. Leveraging a combination of techniques such as hierarchical representation learning and transformer-based architecture, DALL·E 2 can generate high-quality images with intricate details and diverse styles. With applications in various fields such as art, design, and content creation, DALL·E 2 represents a significant advancement in AI-driven image generation, enabling users to effortlessly translate their ideas and concepts into visual form.

DEDICATED SERVER

You can choose to host your website via a dedicated or shared server that might be home to thousands of other sites. You can also pay extra for a unique IP address. The debate over the pros and cons has rumbled on for over a decade. It’s logical that a dedicated server with more allocated RAM and bandwidth, plus greater processing power, will be faster and the site will load and respond quicker. But there are so many other variables to consider. It’s also a question of cost. A new startup company might struggle to stretch their budget to a dedicated, managed server of their own.

DEEP LINK

Deep links are great for SEO because they direct the user straight to a specific webpage, rather than just stranding them on a homepage. This means the page in question will naturally build more authority and the website’s backlink portfolio will become more diversified. Both great for site ranking. It also leads to an effortless user experience which often translates into improved conversion rates. There’s also a real buzz about deep linking in the world of mobile apps, which leverages the use of URIs (uniform resource identifiers) to offer a richer experience and increased engagement.

DEEPMIND

DeepMind is a world-renowned artificial intelligence research lab acquired by Google in 2014, known for its groundbreaking contributions to AI and machine learning. Founded in 2010, DeepMind focuses on developing algorithms and systems that can learn to solve complex problems and make decisions in a manner akin to human intelligence. Their achievements span various domains, including games like Go and chess, healthcare applications such as disease diagnosis and drug discovery, and energy efficiency optimization in data centres. DeepMind’s flagship project, AlphaGo, made headlines in 2016 by defeating the world champion Go player, marking a significant milestone in AI advancement. Committed to ethical AI development, DeepMind emphasises transparency, safety, and societal impact in its research endeavours, striving to harness AI for the betterment of humanity while addressing potential risks and challenges.

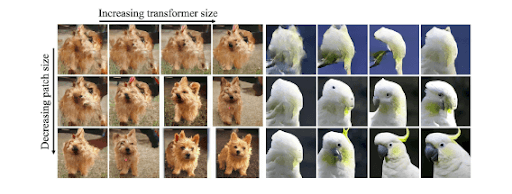

DIFFUSION TRANSFORMER

The Diffusion Transformer is a novel variant of the Transformer architecture, a widely used model in natural language processing and other AI tasks. Introduced in late 2022 by researchers William Peebles and Saining Xie, the Diffusion Transformer incorporates ideas from diffusion probabilistic models to improve sample quality and efficiency. It utilizes a diffusion process during generation, gradually refining noisy inputs into high-quality outputs. This approach allows for more coherent and diverse generations compared to traditional transformers. The Diffusion Transformer has shown promising results in various tasks, including text generation, image synthesis, and unsupervised learning. Its innovative design represents a significant step forward in generative modeling, offering new opportunities for AI research and applications.

DIRECTORY

Back in the prehistoric era, before 1998, Google and its ubiquitous spiders were yet to evolve. You’re probably wondering how the poor cave-dwellers survived without the Big G in the sky. Well, they had to rely on rudimentary systems known as directories or listings directories.

Editors working on platforms like Yahoo! Directory and DMOZ did a great job compiling and assessing submissions to these massive lists, laying them out in user-friendly categories and sub-categories. Neither has survived: Yahoo! Directory closed at the end of 2014 and DMOZ, an open-content resource maintained by teams of volunteer editors, followed in 2017 (yes, we’re also wondering what kind of person volunteers to sort data).

Long gone are the days when web developers submitted their sites to every directory they could find, to build links and improve ranking. This practice was once encouraged by Google but it turned out to be a giant mistake that created a virtual gold rush. Eventually bosses decided that enough was enough and started to devalue links or even purge directories.

Today directory submission can still form part of your SEO tool-kit, provided you use a reputable one i.e. one that uses proper moderators and a strict approval process. Best to opt for well-known names like Yellow Pages and True Local or others that charge an inclusion fee, such as Business.com.au or Flyingsolo.com.au. You should also investigate niche directories to match your specific industry.

DISAVOW BACKLINKS

As previously discussed, backlinks can be good or bad (toxic). It’s all about following Google’s quality guidelines by avoiding or taking down low-quality, irrelevant links that will damage your ranking. But first they need to be identified and tracked. In Google, a handy list can be downloaded using Webmaster Tools. Bing also offers its own version, as do other companies like Moz and Ahrefs.

The next stage is time-consuming, as it involves a lengthy evaluation process, during which you need to establish the quality of each link. It’s important not to throw the baby out with the bath water! You need to look out for cheap looking, spammy or low relevance sites.

Finally, the owners of the offending websites must be contacted by email and asked to either remove the links or add a rel="nofollow" instruction to the link tag. This is called ‘link pruning’. If repeated requests are ignored you need to advance to DEFCON 1 and send a disavowal request to Bing or Google, telling them to disregard the links.
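For the Google route, the request takes the form of a plain text file. As we understand the format, it holds one entry per line: either a full URL or a whole domain prefixed with `domain:`, with lines starting with `#` treated as comments. The sketch below assembles such a file. The function name and the offending sites are hypothetical, and you should check Google’s current instructions before uploading anything.

```python
def build_disavow_file(domains=(), urls=(), note=""):
    """Return the text of a disavow file listing whole domains and single URLs."""
    lines = []
    if note:
        lines.append(f"# {note}")
    # Whole domains first, then individual pages, de-duplicated and sorted
    lines.extend(f"domain:{domain}" for domain in sorted(set(domains)))
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


disavow_text = build_disavow_file(
    domains=["spammy-links.example", "cheap-seo.example"],
    urls=["https://blog.example/bad-post.html"],
    note="Owners contacted twice, no reply",
)
print(disavow_text)
```

Disavowing a whole domain is the blunter instrument, so save it for sites where every link looks toxic.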

DOMAIN NAME

A domain name is a website’s unique address. It will typically include your brand name, service or product description. Google got wise to the practice of stuffing keywords into domain names and announced its EMD (Exact Match Domain) update in 2012, effectively snatching a useful SEO tool from the hands of disgruntled webmasters. Today it’s best to see your domain name as a great way to establish your brand by sending out signals. Google will eventually notice, assume your site has genuine authority and reward it with higher ranking (all things being equal). Internet giants like Twitter, Reddit, Facebook and Amazon prove that keywords in domains aren’t really that important. People remember brands not descriptions.

It’s best not to use hyphens, numbers and intentionally misspelled words in your domain, as searchers may struggle to recall them or will likely mistype them.

When it comes to choosing a domain name extension (top-level domain) ‘.com’ remains the most desirable and carries the most SEO weight. Others, such as ‘.biz’ have undesirable connotations with low quality spam sites and are best avoided where possible.

DOORWAY PAGE

Otherwise known as ‘gateway pages’, doorway pages are designed by web developers purely to rank highly for specific search queries. They are an SEO tactic, considered grey hat at best, and frowned upon by Google for their tendency to result in a poor user experience.

Google’s Penguin algorithm might not sound intimidating but it can cause havoc with your page ranking. Even the mighty eBay has fallen foul of the pernicious Penguin and thus lost traffic. If you’ve ever used shopping comparison sites you’ve probably landed on a doorway page, only to find the site doesn’t carry the product you searched for. Do not confuse them with legitimate landing pages which should precisely match your search by being properly optimised.

To survive the dreaded Penguin’s interrogation and prove that a landing page is not in fact a doorway page, webmasters can take various measures.

- Ensure there are plenty of internal (deep) links

- Avoid duplication

- Do not publish pages with zero content

- Use redirects if necessary

DUPLICATE CONTENT

Throughout these pages, we’ve taken pains to drive home the message that duplication is one of the fastest ways to incur the wrath of Google and other search engines, potentially resulting in heavy penalties and/or traffic loss. When similar or identical content appears in multiple locations (URLs), the internet spiders struggle to work out which is the original or most relevant to index, unless webmasters take specific measures to help them out.

Certain practices can easily be mistaken for black hat SEO, by innocently generating duplicate pages, or your site might even be the victim of 3rd party actions. Here are some of the potential causes of duplication:

- Original and printer-friendly versions of the same page

- Store items accessed via different URLs

- Session IDs

- Scraping and content syndication

- Comment pagination in WordPress

- WWW or non-WWW versions

- Boilerplate repetition

- Misguided use of CMS

It’s a webmaster or SEO consultant’s job to fix these issues, before they become serious problems, compounded by bloggers and other users linking to a plethora of different URLs. Google Webmaster Tools can help web developers identify duplicate content, so they can employ 301 redirects, canonicalization and other methods to remedy the situation.
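If you fancy a quick check of your own before reaching for the big tools, here is a rough sketch that spots pages sharing identical text under different URLs. It assumes you have already gathered each page’s text into a dictionary keyed by URL. The sample pages are made up, and the approach only catches copies that are identical once spacing and capitalisation are ignored, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text):
    """Hash the text after collapsing whitespace and ignoring case."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(pages):
    """Return groups of URLs whose page text is identical after normalising."""
    groups = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical site: a product page and its printer-friendly twin
pages = {
    "/widget": "Our Blue Widget is the best widget money can buy.",
    "/widget?print=1": "Our blue widget   is the best widget money can buy.\n",
    "/about": "We have been making widgets since 1999.",
}
print(find_duplicates(pages))
```

Each group it returns is a candidate for a 301 redirect or a canonical tag pointing at whichever URL you choose as the official one.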

E-E-A-T

E-E-A-T, an acronym for Experience, Expertise, Authoritativeness, and Trustworthiness, is a framework from Google’s search quality guidelines for evaluating the quality and reliability of online content. It extends the earlier E-A-T framework by adding Experience.

Experience pertains to the first-hand or real-life experience the content creator has with the topic, such as having actually used a product or visited a place. Expertise pertains to the level of knowledge and skill demonstrated in producing content within a particular field or topic. It requires not just surface-level understanding but a depth of knowledge that adds genuine value and insight to the audience. Authoritativeness delves into the credibility and reputation of the content creator or the website itself. Factors such as credentials, experience, and endorsements contribute to establishing authority. Lastly, Trustworthiness revolves around the reliability and transparency of the information presented. It involves factors like accuracy, citing credible sources, and providing transparent and honest content that users can rely on.

For content creators and businesses aiming to thrive online, adhering to E-E-A-T principles is paramount. Upholding high standards of experience, expertise, authoritativeness, and trustworthiness can improve content visibility in search results and also fosters a loyal and engaged audience base by offering reliable and valuable information. It’s not merely about meeting search engine criteria; it’s about building a foundation of credibility and trust with the audience, ultimately driving sustained success in the digital landscape.

E-COMMERCE SITE

E-Commerce sites exist to drive retail sales, Amazon and eBay being prime examples. The value of Australian e-Commerce was predicted to top an eye-watering $32 billion by the end of 2017. That number represents massive site competition, which equals a huge SEO challenge for webmasters.

The temptation is often to populate e-commerce sites as quickly as possible, especially with larger sites, but it pays dividends further down the line if you take the time to optimise each page and pay more than cursory attention to product descriptions. This includes avoiding the duplication that comes from lazily lifting copy from manufacturers’ own sites – remember, Google hates duplication. Lengthy descriptions are search engine-friendly and are also more likely to convert to sales, so resist the temptation to cut corners!

EDITORIAL LINKS

Editorial links aren’t bought or actively solicited, but occur naturally. To web developers they stand alongside keywords and content to form SEO’s Holy Trinity.

If you want to attract high quality links you must ensure your site is of equal quality. It should feature a popular, regularly updated blog and be packed with original, unique content. Equally, it’s a good idea to immerse yourself in the online community and comment on other people’s blogs, particularly those related to your own industry. Another great way to build editorial links is to write original articles about your industry or area of expertise.

EMD

EMD, or Exact Match Domain, refers to a website domain name that precisely matches the searched keyword or phrase. In the past, having an EMD was considered advantageous for search engine optimization (SEO) purposes, as it was believed to provide a ranking boost for the exact match keyword. For instance, if someone searched for “best shoes” and a website existed at bestshoes.com, that site was thought to have an edge in ranking for the keyword.

However, over time, search engine algorithms, especially Google’s, have evolved significantly. The emphasis on EMDs has diminished due to algorithm updates that focus more on content quality, relevance, and user experience rather than just the exact match domain. While having an EMD might offer some initial advantage in terms of keyword association, it does not guarantee higher rankings or traffic on its own. Factors such as content quality, backlinks, user engagement, and overall website authority now carry more weight in determining search engine rankings.

Today, SEO strategies focus more on providing valuable, relevant content and building a comprehensive online presence rather than solely relying on having an exact match domain. While EMDs might still hold some value in terms of branding and user recognition, their impact on search rankings has significantly diminished in the face of more complex and sophisticated search engine algorithms.

FEEDS

Web feeds are subscribed to by users and provide a continuous flow of regularly updated news or other data. The two principal feed formats are ‘RSS’ (originally ‘rich site summary’, now usually expanded as ‘Really Simple Syndication’) and ‘Atom’. Because news feeds are syndicated around the web, they obviously don’t provide unique content, which means they won’t improve SEO directly. However, they can contribute in a secondary way by helping to establish your website as the place to go for up-to-date information, which should increase traffic, keep visitors onsite longer and bolster ranking.
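If you’re wondering what a feed actually looks like under the bonnet, it is simply an XML file listing the latest items. The short Python sketch below reads the headlines out of a tiny RSS 2.0 document using only the standard library. The feed content, the URLs and the function name `read_headlines` are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Hypothetical feed, trimmed to the bare minimum of an RSS 2.0 document.
SAMPLE_RSS = """<rss version="2.0">
  <channel>
    <title>Example Industry News</title>
    <link>https://www.example.com/</link>
    <description>Made-up feed used for illustration only.</description>
    <item>
      <title>First headline</title>
      <link>https://www.example.com/news/first</link>
    </item>
    <item>
      <title>Second headline</title>
      <link>https://www.example.com/news/second</link>
    </item>
  </channel>
</rss>"""


def read_headlines(rss_text):
    """Return a (title, link) pair for each <item> in an RSS 2.0 document."""
    root = ET.fromstring(rss_text)
    headlines = []
    for item in root.findall("./channel/item"):
        title = item.findtext("title", default="")
        link = item.findtext("link", default="")
        headlines.append((title, link))
    return headlines


for title, link in read_headlines(SAMPLE_RSS):
    print(title, "->", link)
```

A feed reader does essentially this on a schedule, which is why subscribers see your new items without visiting the site first.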

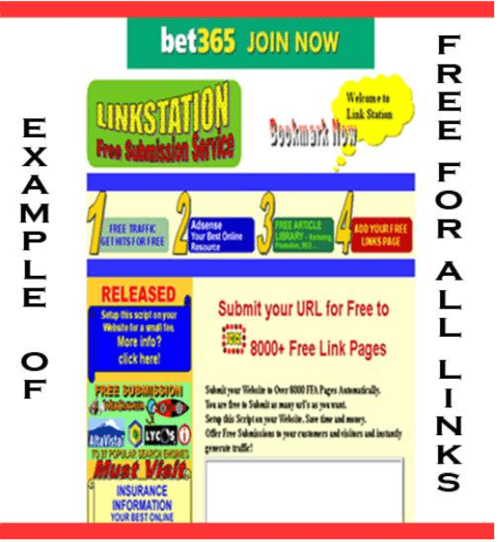

FFA (Free For All)

Anyone can link to FFA sites at no cost, unless you count the potentially high price you pay for plummeting page ranking! They invariably have a cheap, spammy feel and are crammed to bursting with banner ads and pop-ups. They are little more than link farms and to be avoided at all costs. If you want links, it’s always worth spending the time to build the highest-quality ones.

FRAMES

Frames are webpage designs that display two or more pages in rows or columns on a single screen, often resulting in a poor user experience and utter bafflement for easily confused search engine spiders. The greater the number of frames, the greater the likelihood that the site will achieve poor ranking or fail to be indexed at all.

Other drawbacks are that frames may not display correctly on small screens, mobile phones or tablets. Some browsers don’t support frame technology at all. There are also reports of the ‘back’ button not working on frame pages. All things considered – it’s best to stay away.

FTP (File Transfer Protocol)

File Transfer Protocol is a standard internet procedure for transmitting files between computers or uploading and downloading them to and from servers. This is also known by techies as transferring between client and server. An SEO specialist will require access to FTP via a password-protected log-in before starting work.

GANs

GANs, short for Generative Adversarial Networks, are a class of machine learning models that excel in generating realistic synthetic data. Consisting of two neural networks, a generator and a discriminator, GANs operate in a competitive setting where the generator aims to create data resembling the real examples, while the discriminator aims to differentiate between real and fake data. Through adversarial training, GANs iteratively improve both the generator’s ability to produce realistic data and the discriminator’s ability to distinguish between real and fake data. This results in the generation of highly realistic images, text, audio, and more, with applications ranging from image synthesis and style transfer to data augmentation and anomaly detection. GANs represent a powerful tool in the realm of artificial intelligence, driving advancements in creative content generation and data synthesis.

GIZMO

Alternatively known as ‘widgets’ or ‘gadgets’, these are small software applications that appear on webpages. Common examples are hit counters, clocks, calendars and weather forecasts, but there are literally thousands of different gizmos out there. They can support your SEO by providing useful click bait but it’s imperative that you don’t overload a page with them and destroy its aesthetics. Ask the question: Do they add to the user experience? If the answer is “no”, ditch them.

GOOGLE AI

Google AI represents Google’s expansive efforts in artificial intelligence research, development, and application across its wide array of products and services. Established as a dedicated division within Google, Google AI is at the forefront of pioneering AI technologies that power features like voice recognition, image recognition, natural language understanding, and recommendation systems across platforms such as Google Search, Gmail, Google Photos, and Google Assistant. With a focus on advancing the state-of-the-art in machine learning, Google AI conducts research in various fields, including computer vision, speech recognition, and reinforcement learning, leading to breakthroughs like the Transformer architecture and BERT language models. Moreover, Google AI engages in interdisciplinary collaborations with academic institutions and industry partners to address complex challenges and promote the responsible and ethical deployment of AI technologies globally.

GOOGLE BOMBING

Much loved by satirists and other mischief-makers looking to create SERPs havoc, a Google bomb is created when search results are altered using nefarious means, such as building a huge number of links.

The most infamous example exploded way back in 2004, when George W Bush was the luckless target. A Google search for the term, ‘miserable failure’, resulted in the user being directed to the Bush biography on the White House website. The search engine giant has long since tweaked its algorithms to make bombing a lot more difficult to achieve, but it was fun while it lasted!

GOOGLE BOWLING

This may sound like fun but it’s actually the term for reverse SEO and falls firmly into the black hat category. Essentially, Google bowling is the underhand practice of building toxic backlinks to a competitor’s website, with the intention of attracting penalties and damaging their page ranking. Profoundly evil webmasters have even been known to build links that redirect to viruses. A subtler approach is to report a competitor to Google’s WebSpam Team, by filing a spam report, if you suspect they’re buying in links. If you’re tempted to indulge in Google bowling, just remember that ever-vigilant Google is constantly searching the web for suspicious link patterns and you could end up sabotaging your own site.

So, what does a webmaster do to protect their site? First, they need to identify any low quality or unnatural links, using one of the many third-party tools available or by manually investigating the linking sites. Next, they need to report those links to Google via its Disavow Links facility. This asks Google not to take the links into consideration, so the site’s page rank should remain unaffected.
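The disavow list itself is just a plain text file: one URL per line, a `domain:` prefix to disavow an entire domain, and `#` for comment lines. As a rough sketch, the Python below assembles such a file from links you have already reviewed. The function name `build_disavow_file` and the example domains are hypothetical; deciding which links are genuinely toxic is still a job for a human.

```python
def build_disavow_file(domains, urls, note=""):
    """Build the text of a disavow file from reviewed domains and URLs."""
    lines = []
    if note:
        # Lines starting with '#' are treated as comments.
        lines.append(f"# {note}")
    # 'domain:' entries cover every link from that domain.
    lines.extend(f"domain:{domain}" for domain in sorted(set(domains)))
    # Plain entries cover one specific linking page each.
    lines.extend(sorted(set(urls)))
    return "\n".join(lines) + "\n"


text = build_disavow_file(
    domains=["spammy-links.example"],
    urls=["http://bad.example/page.html"],
    note="Reviewed links",
)
print(text)
```

The resulting text can be saved as a `.txt` file and uploaded through the disavow tool.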

GOOGLE DANCE

No, it’s not the annual jamboree for Google geeks, but something that drives webmasters and SEO professionals to distraction – the dreaded database and algorithm updates that Google imposes all too frequently, and their impact on SERPs.

Updates aren’t as disturbing as in the past (Google now does them more regularly) but can still result in wild ranking fluctuations, as the vast cache of pages is reconfigured. The advice for webmasters is – don’t panic! The temptation is to start trying to recoup any losses by throwing everything in your SEO toolbox at the problem, but it’s best to wait a few days and see how things pan out. If pages suffer a long-term fall, you can start introducing rescue measures.

GOOGLE LINK JUICE

As mentioned previously, link juice is the lifeblood of SEO. Google sees links as votes or recommendations for your site. The higher the quality of the link, the greater its positive SEO weight and the more elevated your pagerank, particularly when combined with superb content.
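To make the ‘links as votes’ idea concrete, here is a small Python sketch of the simplified, textbook version of the PageRank calculation. It is an illustration only and certainly not how Google scores pages today; the four-page site, the damping value of 0.85 and the function name `pagerank` are assumptions chosen for the example.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank. `links` maps each page to the pages it links to."""
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Every page starts each round with a small baseline score.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # A page splits its 'vote' equally between the pages it links to.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its score over all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page site: nobody links to the contact page.
site_links = {
    "home": ["blog", "products"],
    "blog": ["products"],
    "products": ["home"],
    "contact": ["home"],
}
ranks = pagerank(site_links)
for page, score in sorted(ranks.items(), key=lambda item: -item[1]):
    print(f"{page}: {score:.3f}")
```

In this toy site the contact page ends up with the lowest score because nothing links to it, while the pages that receive links from well-linked pages rise to the top.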

Googlebots automatically assess the links on every web page, to come up with an overall domain rank. Many webmasters are tempted to overload their homepages with links, whilst neglecting the rest of the site. This is a bit like showing off in a flashy sports car with a tiny engine. A more effective approach is to sprinkle high quality links across all pages in a process known as ‘deep linking’. That will make them easy for spiders to find and rank accordingly. This is even more crucial since Google launched its Panda update, which actively penalizes link-heavy homepages. And don’t forget – link juice can also be channelled internally. Check which pages on your site are getting a lot of traffic and high quality inbound juice, then simply link them to your underperforming pages.

Certain pages will never rank highly, despite your best efforts, so don’t waste valuable link juice on them. We’re talking about Contact, Pricing and About Us pages, in particular. You can easily discourage the attention of spiders by placing a robots.txt file in the site’s top-level directory, adding robots meta tags to the pages themselves and using nofollow attributes on links.
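A robots.txt file is nothing more than a short list of rules telling crawlers which paths to stay out of. The Python sketch below uses the standard library’s `urllib.robotparser` to check how a well-behaved crawler would read one. The rules, the `www.example.com` URLs and the helper name `is_crawlable` are hypothetical. Bear in mind that robots.txt controls crawling rather than indexing, so a robots meta tag is the more reliable way to keep a page out of search results.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules asking all crawlers to skip two low-value sections.
ROBOTS_TXT = """\
User-agent: *
Disallow: /contact/
Disallow: /pricing/
"""


def is_crawlable(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given rules allow `user_agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


for path in ("/contact/", "/pricing/", "/blog/"):
    url = "https://www.example.com" + path
    print(path, "crawlable" if is_crawlable(ROBOTS_TXT, url) else "blocked")
```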

GOOGLEBOT

Googlebot is the program Google uses to trawl through the web, discovering new and updated pages. Otherwise known as spiders, the bots get through an unimaginable amount of legwork covering the billions of pages that now make up the World Wide Web. They do this by first accessing lists of URLs and sitemaps, and then painstakingly following links from page to page.
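The ‘start from a list, then follow the links’ approach can be pictured with a toy crawler. The Python sketch below walks a made-up, in-memory site rather than the real web, and it is in no way Googlebot’s actual code. The page paths, the `SITE` dictionary and the function name `crawl` are all invented for the example.

```python
from collections import deque

# Hypothetical miniature website: each page maps to the pages it links to.
SITE = {
    "/": ["/blog", "/products"],
    "/blog": ["/blog/post-1", "/"],
    "/products": ["/products/shoes"],
    "/blog/post-1": [],
    "/products/shoes": ["/"],
    "/orphan": ["/"],  # no page links here, so a crawler never finds it
}


def crawl(start_urls, get_links):
    """Visit pages breadth-first, following links and skipping repeats."""
    queue = deque(dict.fromkeys(start_urls))
    seen = set(queue)
    visited = []
    while queue:
        url = queue.popleft()
        visited.append(url)
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


pages_found = crawl(["/"], lambda url: SITE.get(url, []))
print(pages_found)
```

Notice that `/orphan` is never discovered, because no other page links to it. That is why internal links and sitemaps matter so much: a page the spider cannot reach is a page that will not be indexed.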

Occasionally crawl errors can prevent pages from appearing in SERPs, so a webmaster needs to check this on a regular basis, by going to Google’s ‘Crawl Errors Report’ page. Here you’ll find a handy list of site and URL errors over the past 90 days that you can then rectify.

There may be times when you don’t want a page indexed (perhaps you’re doing work on it or it includes private content) and there are several means available to prevent it (see Google Link Juice).

GPT-3